
Logbook 2022 H1

- What is this about?

- Newer entries

- June 2022

- May 2022

- April 2022

- March 2022

- February 2022

- January 2022

- Older entries

- Wanting to run a Babbage-era hydra-node against the testnet while it's still in the Alonzo era. Copied scripts from `vasil-testnet/` -> `testnet/`. Should be reusable.

- Synchronizing the testnet takes ages... Once synced up, running a Babbage hydra-node against it:

```
[ch1bo][~/code/iog/hydra-poc/testnet][⌥ ch1bo/babbage-era] λ ./hydra-node.sh
hydra-node: HardForkEncoderDisabledEra (SingleEraInfo {singleEraName = "Babbage"})
```

- When running the master hydra-node against the testnet, cardano-node seemed stuck
  - Restarting takes long (replaying blocks)
  - For some reason, I cannot connect to the hydra-node once it's running (no TUI, no websocket)
  - Receiving a `websocket: close 1006 (abnormal closure): unexpected EOF` -> works fine on devnet / demo though!?
  - On Ctrl+C the node prints `hydra-node: SeedBytesExhausted {seedBytesSupplied = 8}` -> exception?
  - When not trying to send history, the first client input (`Init`) makes the hydra-node crash with `hydra-node: SeedBytesExhausted {seedBytesSupplied = 8}`
  - Found it: the hydra keys were not proper / too short, and `loadSigningKey` from disk failed when forcing it on first use (lazy IO). Plus, the websocket thread died silently.
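A minimal sketch of failing fast on a malformed key, assuming the file holds the raw 32-byte Ed25519 seed (the real hydra key file format may differ, and `checkSeed` / `loadSeedStrict` are hypothetical names). Reading strictly and validating before returning means a short key aborts the node at startup instead of blowing up lazily inside the websocket thread:

```haskell
import qualified Data.ByteString as BS

-- | Assumed seed length for an Ed25519 signing key.
ed25519SeedSize :: Int
ed25519SeedSize = 32

-- | Reject a seed of the wrong length up front, instead of failing later
-- when the key is first forced.
checkSeed :: BS.ByteString -> Either String BS.ByteString
checkSeed bytes
  | BS.length bytes == ed25519SeedSize = Right bytes
  | otherwise =
      Left ("expected " <> show ed25519SeedSize <> " seed bytes, got " <> show (BS.length bytes))

-- | Hypothetical loader, assuming the file contains the raw seed bytes.
-- Data.ByteString.readFile reads the whole file strictly, and the length is
-- checked before returning, so a bad key aborts here rather than on first use.
loadSeedStrict :: FilePath -> IO BS.ByteString
loadSeedStrict path = do
  bytes <- BS.readFile path
  either (ioError . userError) pure (checkSeed bytes)
```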

- Did a full smoke test run of a single-node head on testnet: ~10 tADA cost?

- Continuing updates to Babbage: lately, the `outputs` of a `Babbage.TxBody` seemingly turned into `Sized TxOut`... We really should rely more on the cardano-api types than on the ledger ones.
- We see the `tx-cost` benchmark busy-looping on some (??) contest transactions
  - We narrowed it down to the serialization of those txs??
  - Turns out... we cannot even `show` these transactions!?
  - After hours, we found the issue: `genContestTx` was looping forever on the `suchThat` predicate for `genPointInTime`
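QuickCheck's `suchThat` keeps retrying until the predicate holds, so an unsatisfiable predicate means an infinite loop; `suchThatMaybe` is the bounded variant. The sketch below shows the two usual ways out over a plain list of candidate draws rather than a `Gen` (the function names are made up): give up after a fixed budget, or construct a value that is valid by design.

```haskell
import Data.List (find)

-- | Bounded rejection sampling: look at no more than `budget` candidates and
-- return Nothing instead of spinning forever when none satisfies `p`.
sampleBounded :: Int -> (a -> Bool) -> [a] -> Maybe a
sampleBounded budget p = find p . take budget

-- | Constructive alternative: map a raw draw straight into the inclusive
-- window [lo, hi], so no draw is ever rejected. Assumes lo <= hi.
pointInWindow :: Integer -> Integer -> Integer -> Integer
pointInWindow lo hi raw = lo + raw `mod` (hi - lo + 1)
```

Mapping a raw draw into the window, as `pointInWindow` does, is usually preferable for things like points in time, since nothing is ever rejected.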

- For some reason, some generated `fanoutTx` are a lot smaller than others, which is particularly weird for a big number of outputs.
  - The resulting number of outputs on the tx was not 84 but 2 in one example
  - The reason: sometimes the initial snapshot is used to close the head... we need to separate benchmark generators from test generators!

- Starting work on #300 by rebasing `babbage-preview` on top of master again... it is becoming a pain.
- Continuing the odyssey of upgrading tests to compile with Babbage, I encounter a (now?) missing `Arbitrary Praos.Header` instance?
  - Asked in #consensus and #ledger; continuing with other compile errors
- In the midst of fixing compilation errors, we needed to update cardano-node to the latest tag because the old commit is "gone"
  - Updated to `1.35.0-rc4`
  - This led to a barrage of further changes
  - Seems like cardano-api got some more updates because of reference inputs: script witnesses can now be either `PScript` or `PReferenceScript`
  - The error types of `Alonzo.Tools.evaluateTransactionExecutionUnits` and the monad of `EpochInfo` changed
  - Instead of fixing `evaluateTx` for these new types, we could migrate it to the cardano-api's version?
  - Tried this, but I got stuck creating a fixture for `eraHistory` from `fixedEpochSize`. No brain capacity left...

Changing the representation of `Payment` so that it dumps the addresses instead of the signing keys, which makes it easier to relate to the UTxO displayed in the logs. We are not using the `Show` instance of `AddressInEra` but the `ToJSON` one, which uses the serialise-to-bech32 function.

Adding some logs to the `Direct` component to display chain time, so that we capture some information about the context in which the contestation deadline is computed. Looking at the fanout deadline issue w/ MLabs team:

- Checking the dates inside each container are correct :check:
- Checking `systemStart` in the cardano-node -> `genesis-byron.json` and `genesis-shelley.json` :check:
- Checking that `cardano-cli query tip` produces something that makes sense :check:

It seems the `currentPointInTime` function is wrong: it always gives the same answer whenever it's called, because it depends on the `slotNo` retrieved when we invoke the `queryTimeHandle` function, which happens only once.

FIXED: Turns out this was caused by the `major` field of `genesis-shelley.json` being set to 0: it should be 6.

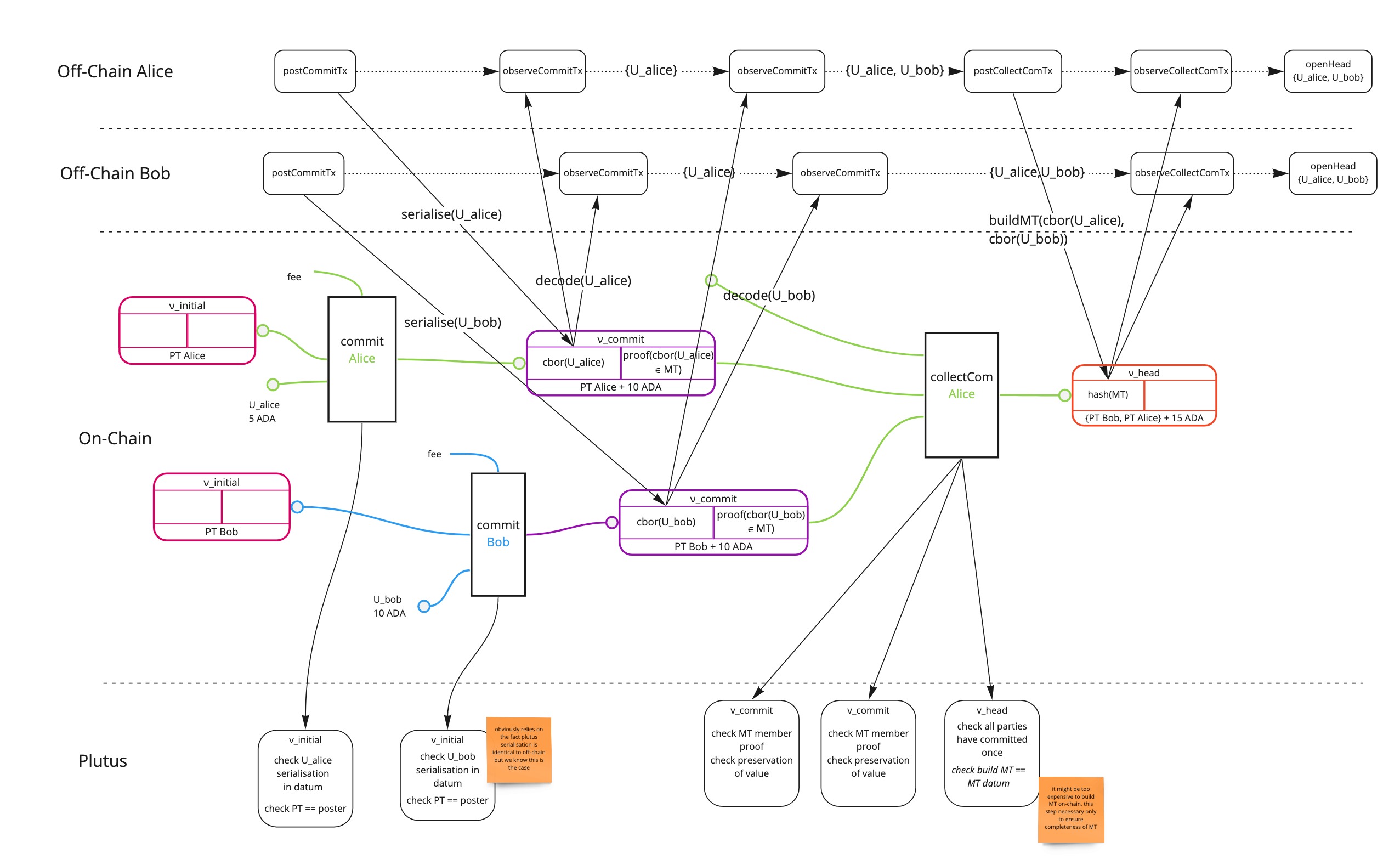

As we are getting closer to "General Availability" of the Hydra node and Head protocol, i.e. releasing a version (1.0) that is deemed usable on mainnet, we want to increase the confidence in our codebase and verify that it correctly implements the Hydra Head protocol. More precisely, we want a high level of assurance that the Safety Properties stated and proven in the paper indeed hold in our reference implementation. We have been exploring various approaches to this, discussing with other teams at IOG and assessing which avenue would benefit us most. This is discussed in the Formalising Hydra page.

As our goal is to provide both on-chain and off-chain validation of our system, we settled on a Model-Based Testing approach using quickcheck-dynamic, the framework initially developed by Quviq for testing Plutus smart contracts. We are aiming to build models and test the Hydra Head's properties in at least two flavors:

- One model running in the `IOSim` monad, based only on quickcheck-dynamic's base framework, focusing on the overall correctness of the off-chain part of the protocol, assuming validity of the on-chain code,
- Another model to check the behaviour of the on-chain Plutus code and the `Hydra.Chain.Direct` component, using not only QD but also Plutus' emulator in order to actually build and validate transactions.

We've already started work on the first model and it already helped us pinpoint some issues in the DX while writing the model.

Working on #388, now trying to rebuild images from latest master in order to spin up a demo environment and check whether or not I can reproduce the behaviour of the bug.

We cannot reproduce the bug with latest master (3c2cca4841a849b0719f97dbebeee39136bf887e):

- We can correctly init/commit/close/fanout a head without any transaction

- We can do the same with one transaction

Back to working on #391, picking up where we left off and rebasing on master.

We realise the `NewTx` command should wait until a non-empty UTxO appears, which is not the case:

- => we should filter `NewTx` in the model to make sure there's a UTxO for the party
- The transaction is invalid though, because the amount exceeds what's available for spending

Adding a precondition to filter on the possibility of a `NewTx` leads to the generator looping. Why?

- Adding traces to understand why the model is looping

- It seems the node is looping in the event queue processing, preventing further tx to be processed and outputs to appear

We try resubmitting the same tx several times: from the POV of the model they are different, but in the `perform` they end up being the same because the previous tx has not been confirmed. This highlights an issue in our node; we should do either or both of:

- Ensure submitted Tx have a TTL so that we don't keep them indefinitely in the event queue

- Filter tx so that already applied tx are not enqueued
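A rough sketch of both mitigations, with made-up names and a TTL counted in processing rounds rather than slots or seconds: `enqueue` skips txs that were already applied or are already waiting, and `processRound` ages the txs that cannot be applied yet and drops them once their TTL runs out.

```haskell
import Data.List (partition)

-- Hypothetical stand-ins: txs are identified by an id, and a queued entry
-- carries a time-to-live counted in processing rounds.
type TxId = String

data Queued = Queued {qTxId :: TxId, qTtl :: Int}
  deriving (Eq, Show)

-- | Enqueue a tx only if it was neither applied already nor already waiting.
enqueue :: [TxId] -> Int -> TxId -> [Queued] -> [Queued]
enqueue applied ttl txId queue
  | txId `elem` applied = queue
  | txId `elem` map qTxId queue = queue
  | otherwise = queue ++ [Queued txId ttl]

-- | One processing round. Txs that can be applied are returned first; the
-- others lose one unit of TTL, and those reaching zero are dropped instead of
-- being retried forever. Result: (applied, still queued, expired).
processRound :: (TxId -> Bool) -> [Queued] -> ([TxId], [Queued], [TxId])
processRound canApply queue = (map qTxId ready, alive, map qTxId expired)
  where
    (ready, waiting) = partition (canApply . qTxId) queue
    aged = [q {qTtl = qTtl q - 1} | q <- waiting]
    (alive, expired) = partition ((> 0) . qTtl) aged
```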

- Adding a `waitUntilMatch` on `SnapshotConfirmed` does not fix the issue: the test times out => the faulty tx is never seen confirmed
- Seems like the first tx is OK, but the second one is invalid, which "explains" why we loop on the second one -> could it be that leader election is wrong in the case of a single node?

Trying to generate a head with more than one party => same issue

Tracing the outputs from the node shows that we have `CommandFailed`, which is suspicious -> there should not be any.

- Adding some context to `CommandFailed` would be useful -> adding the faulty `ClientInput`
- We probably try to submit a `NewTx` in a state that's not correct?

The problem is that we need to wait for `HeadIsOpen` before submitting `NewTx`, not after.

Seems like checking the opening of the head before submitting solves the infinite looping issue, even though we end up with errors when submitting "similar" txs in a single-member head.

Other errors we see:

- Negative tx amount in tx: the negative amounts error is caused by shrinking. We don't have any shrinking of the actions themselves, but the engine tries to shrink the trace, thus potentially discarding intermediate actions. As we don't have a strong precondition on `NewTx`, we can end up with a non-spendable tx, i.e. one not matching the UTxO

Adding a precondition removes the negative amount failure, but we still have a failure when comparing the states. This is caused by a discrepancy b/w the model and the actual UTxO: we want a consistent account-based model using addresses

- Note: cardano-api addresses are not sortable, but ledger's are!

- Seems like our `applyTx` is definitely wrong...

We need some improvements on DX/UX:

- Infinite looping on new tx

- Error reporting

- State observation/sync API on node

The assertion in `ModelSpec` is wrong: we compare the initial UTxO from the implementation (observing `HeadIsOpen`) with the confirmed UTxO from the model, and it's not surprising they are different after a `NewTx`.

We are still observing timeout failures when a single node runs more than one transaction; we would like to have the node's logs as a counterexample

- Adding a `TVar` in the `TestHydraNode` works, but we have a failure before the assertion is even tested
- We need to be able to dump the logs when the `IOSim` evaluation fails

Struggling to find a way to dump logs within the `ModelSpec`. I am trying to leverage the `printTrace` and `shouldRunInSim` functions from the `Util` module.

The idea is to look at all the traces generated in `IOSim` and select those about logging; this works in `BehaviorSpec` but seems to fail in `ModelSpec`

- Refactored `printTrace` to be pure and return a `Text`; this still works in the `BehaviorSpec`: injecting a fault makes the test dump the node's logs, but no logs appear for the `ModelSpec`
- Realised that I was not passing the correct tracer -> we now have a dump of logs when the `IOSim` monad fails. This removes the storage of logs inside the `TestHydraNode`, which prevents getting logs as `counterexample` in case the property fails, which is annoying. The problem is that the assertion runs in the `StateT (Nodes ..) (IOSim s)` monad, which implies the trace is not available as we are still running the action.

Seems like we have a clear idea of what's going on:

- When we compute the hash for the `InitialSnapshot`, we order them by the `TxIn` corresponding to the `CollectCom` transaction, but the UTxO inside the `InitialSnapshot` uses the original `TxIn`s
- We need an ordering for the initial snapshot's `TxOut`s that's stable across the collectCom/close dance
- We used to sort by `TxOut`, but this was removed in b82f880d1885

Trying to put back the previous version of `hashTxOuts` -> still have test failures though...

Proposal:

- Use a single function for computing the hash everywhere: there is a `hashUTxO` in the `IsTx` typeclass; we can change it to output not only the hash but also the (ordered) list of `TxOut`
- This requires deserialising the `TxOut` from its serialised representation in the datum, in the `collectCom` function

Problem: on-chain, we hash using the "natural" ordering of the inputs to the `collectCom` transaction, which corresponds to the outputs of the commit txs, not to the UTxO we maintain on L2, which has the `TxIn`s of the inputs to the commit txs.

Solution: we should "rewrite" the off-chain UTxO's `TxIn`s to the ones effectively consumed by the `CollectCom` transaction

We modify `observeCommitTx` to replace the original `TxIn` with the `commitIn` corresponding to the `txId` of the commit transaction. This entails removing a lot of code related to the observation of initial inputs, which appears useless, but it breaks quite a few tests along the way.

Also, we notice the commit observation does not check the `headId`, which means we observe any commit and only verify the party is part of the "current" head -> this should lead to failures in ETE tests with 2 open heads

Adding back the observation of the initials in `observeCommit`, and erroring when we consume more than one initial, yields errors in the commit observation property tests -> :thinking_face:

We still have a failing test for the fanout hash: it fails because of a problem in the tests themselves

- We generate a U0 in `genStOpen` and then another (arbitrary) UTxO to pass to `genStClosed`
- We always use the latter, whether or not the (arbitrary) snapshot to fan out is initial, but in the former case we should pass U0

Generating initial snapshots as part of `genStClosed` breaks `genContest`, because the minimum snapshot number can now be 0, so we can post a contest with an initial snapshot, which is incorrect. -> The `genConfirmedSnapshot` function is very awkward...

The UTxO we return from `genStOpen` is not the one that will end up off-chain; we need to reconstruct the off-chain UTxO from the actual commits

This highlights the fact that the current `StateSpec` is hard to modify and understand, as it's probably too fine-grained and closely tied to the implementation, requiring a great deal of external knowledge

Now the ETE tests fail because the `TxIn`s of the UTxO we expect are incorrect: we need to change the expectations to check that the `TxOut`s match, without the `TxIn`s

Here are the latest failures:

```
Failures:

test/Test/DirectChainSpec.hs:292:26:
1) Test.DirectChain can init and abort a 2-parties head after one party has committed
expected: OnCommitTx {party = Party {vkey = HydraVerificationKey (VerKeyEd25519DSIGN "38088e4c2ae82f5c45c6808a61a6490d3c612ce1da235714466fc748fbc4cbbb")}, committed = fromList [(TxIn "80e9db7411365a3fce90cfb6cbba9c7c8a246ec27a67fc1b8ccd1b739bcac763" (TxIx 1),TxOut (AddressInEra (ShelleyAddressInEra ShelleyBasedEraAlonzo) (ShelleyAddress Testnet (KeyHashObj (KeyHash "f8a68cd18e59a6ace848155a0e967af64f4d00cf8acee8adc95a6b0d")) StakeRefNull)) (TxOutValue MultiAssetInAlonzoEra (valueFromList [(AdaAssetId,66000000)])) TxOutDatumNone)]}
but got: OnCommitTx {party = Party {vkey = HydraVerificationKey (VerKeyEd25519DSIGN "38088e4c2ae82f5c45c6808a61a6490d3c612ce1da235714466fc748fbc4cbbb")}, committed = fromList [(TxIn "4d21d53e885c81f3b4227fd6cec2eff09d908ac425381830cf0b4f2a7933bf2a" (TxIx 0),TxOut (AddressInEra (ShelleyAddressInEra ShelleyBasedEraAlonzo) (ShelleyAddress Testnet (KeyHashObj (KeyHash "f8a68cd18e59a6ace848155a0e967af64f4d00cf8acee8adc95a6b0d")) StakeRefNull)) (TxOutValue MultiAssetInAlonzoEra (valueFromList [(AdaAssetId,66000000)])) TxOutDatumNone)]}

To rerun use: --match "/Test.DirectChain/can init and abort a 2-parties head after one party has committed/"
```

Replacing the `shouldBe` assertion in `DirectChainSpec` with an `observesInTimeMatching` so that I can pass a predicate, then defining a predicate that checks `OnCommitTx` irrespective of the `TxIn`s.

- The problem is that I would like to ensure the `TxOut` lists are the same regardless of ordering, but there is no `Ord` instance for `TxOut` => need to check inclusion in both directions, one by one
- As expected, our change that "rewrites" the UTxO to replace the original `TxIn` severely impacts the ETE tests, as we can no longer assume that what we commit is put into the head as is, and prebuild transactions from those UTxO. This is really annoying from a test-writing and probably a user perspective
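Checking inclusion in both directions is not quite enough on its own: `[a, a, b]` and `[a, b, b]` include each other. A sketch of an `Eq`-only comparison that also respects multiplicity (hypothetical helper names) removes each matched element before looking at the next:

```haskell
import Data.List (delete)

-- | Order-insensitive equality using only Eq, for element types without an
-- Ord instance. Each element of the first list must be matched by a distinct
-- element of the second, so duplicates are counted.
sameElements :: Eq a => [a] -> [a] -> Bool
sameElements [] ys = null ys
sameElements (x : xs) ys
  | x `elem` ys = sameElements xs (delete x ys)
  | otherwise = False

-- | Naive mutual inclusion, for comparison: ignores multiplicity.
mutuallyIncluded :: Eq a => [a] -> [a] -> Bool
mutuallyIncluded xs ys = all (`elem` ys) xs && all (`elem` xs) ys
```

This is quadratic in the list length, which is fine for the handful of outputs a test commits.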

Writing an ETE test to expose the problem with immediately closing a head from U0, something we should have done from the get-go

- What we really want is not replacing the `TxIn` but computing the UTxO hash by ordering the commits according to their original `TxIn`, not the ones of the commit outputs
- When we observe the commits, we accumulate in the state the `commitOutputs`, which are the things we will spend in the `collectComTx`, and we compute the UTxO hash on the ordering of these commit outputs, extracting the encoded `TxOut`. The committed outputs are just reported up to the `HeadLogic` but not accumulated, so when we create the `CollectComTx` we only have the `commitOutputs` available and not the committed UTxO to compute the hash.

Struggling to fix the tests after adding the committed UTxO as an argument to `collectCom`: we now need to return the generated UTxO, which once again highlights how cumbersome it is to work with tuples...

Not all tests are passing! I now see errors in the `collectCom`-specific tests, and the ETE tests fail. Why?

- The failures in the `ContractSpec` are somewhat logical: we reconstruct the hash from the commit outputs using their ordering, and it's now different from the one used in `collectCom`.

Idea: include the `TxIn` (or `TxOutRef`) in the commit datum so that we can sort on it when checking the `txOutHash`

- I am stuck trying to put the `TxOutRef` in the commit datum in order to be able to sort it properly in the `Head` script.
- `TxOutRef` has no `Ord` instance... Trying to implement `Ord` for `TxOutRef` failed for some mysterious reason: got a full PLC dump telling me some function was not `INLINEABLE`, which seemingly was related to my `Ord` declaration, but it's unclear why
- Just use a custom sorting function

Adding the TxOutRef on-chain and using that to sort the commits before hashing solves our issues:

- Consequence is that we can keep the same UTxO from L1 in L2 as U0 which is better DX, at the expense of the sorting and storage cost in the script.
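To illustrate why sorting by the original output reference makes the hash independent of commit order, here is an off-chain sketch with simplified stand-in types (`TxOutRef`, `Commit` and `hashCommits` here are hypothetical, not the on-chain code). It uses an explicit comparator and an insertion sort instead of an `Ord` instance, mirroring the "custom sorting function" workaround, and plain concatenation stands in for the real hash:

```haskell
-- Hypothetical stand-ins: a TxOutRef is (tx id, output index) of the
-- committed output, and each commit carries its already serialised TxOut.
data TxOutRef = TxOutRef {refTxId :: String, refIdx :: Integer}
  deriving (Eq, Show)

data Commit = Commit {commitRef :: TxOutRef, serialisedTxOut :: String}
  deriving (Eq, Show)

-- | Explicit comparison instead of an Ord instance.
compareRef :: TxOutRef -> TxOutRef -> Ordering
compareRef a b = compare (refTxId a, refIdx a) (refTxId b, refIdx b)

-- | Insertion sort driven by the explicit comparator.
sortCommits :: [Commit] -> [Commit]
sortCommits = foldr insert []
  where
    insert c [] = [c]
    insert c (d : ds)
      | compareRef (commitRef c) (commitRef d) /= GT = c : d : ds
      | otherwise = d : insert c ds

-- | Stand-in for the real hash: concatenating the sorted serialised outputs is
-- enough to show the result no longer depends on the order of the commits.
-- A real implementation would feed this into a cryptographic hash.
hashCommits :: [Commit] -> String
hashCommits = concatMap serialisedTxOut . sortCommits
```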

Pairing Session - #375

Did some cleanup on the Model and ModelSpec:

- Removed the unneeded `WrapIOSim` monad
- Cleaned up unneeded functions for casting and indirection in tests
- Make a single state in `WorldState`
- Simplify `Action` to have a single `Command` wrapping a `ClientInput`. This is made possible thanks to `actionName`, a method of the `StateModel` class that allows fine-tuning the classification of actions
- Also improved `precondition` to reject by default

We want to complete the Model to address the full lifecycle of the Head protocol.

- Now tackling what happens in the `Open` state, starting with generating a `NewTx` command: we select one random party, then look up a UTxO it can spend and generate a transaction from it using `mkSimpleTx`. We consume a UTxO we own and send the value to some address owned by someone else in the `Head`
- Interestingly, while trying to write the `NewTx` command, we realise we need to abstract the model away from the implementation details: a new transaction should be expressed in the model as `Alice -[ 10 ]-> Bob`, i.e. a simple payment transaction, leaving the details of constructing the actual `Tx` to the `perform`. This highlights the fact that the `Model` is really, well, a model, and in our case it embeds some assumptions about the "use case".

We introduce a `Payment` type that will be used as a parameter for `ClientInput` in the `Action` datatype.

- Working on a `Payment` type, implementing the `IsTx` typeclass and updating the generators
- We need to convert from our `Payment` UTxO to the standard UTxO, which requires a `TxIn`, but we cannot invoke `arbitrary` in the `Action` monad
- We can use the vk as the raw source of bytes for the `txId`, because the latter is a hash encoded with `blake2b_256` and hence has 32 bytes, whereas the hash of a vk is a `blake2b_224` (28 bytes).
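A sketch of that trick with plain byte lists (all names here are hypothetical): an Ed25519 verification key is 32 bytes, the same length as a `blake2b_256` tx id, so the key bytes can be reused directly, whereas a 28-byte key hash could not. The `Payment` record mirrors the `Alice -[ 10 ]-> Bob` shape of the model:

```haskell
import Data.Word (Word8)

-- Hypothetical model-level payment, "Alice -[ 10 ]-> Bob", where parties are
-- identified by their raw verification-key bytes.
data Payment = Payment
  { from :: [Word8]
  , to :: [Word8]
  , amount :: Integer
  }
  deriving (Eq, Show)

-- | A tx id is a blake2b_256 digest (32 bytes) and an Ed25519 verification key
-- is also 32 bytes, so the key bytes can stand in for a tx id. A blake2b_224
-- key hash (28 bytes) has the wrong length and is rejected.
txIdBytesFromVk :: [Word8] -> Either String [Word8]
txIdBytesFromVk vk
  | length vk == 32 = Right vk
  | otherwise = Left ("expected 32 bytes, got " <> show (length vk))

-- | Hypothetical TxIn for the payment's input: (tx id bytes, output index 0).
paymentTxIn :: Payment -> Either String ([Word8], Int)
paymentTxIn p = fmap (\txId -> (txId, 0)) (txIdBytesFromVk (from p))
```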

Writing a proper model requires some logic to represent the behaviour of the ledger. Using an account-based ledger assumes we always consume full UTxOs.

We have updated the tests in accordance with the new model which required some simplifications of UTxO to be able to compare the states. But we now get errors because we cannot generate a payment:

```
test/Hydra/ModelSpec.hs:34:5:
1) Hydra.Model implementation respects model
uncaught exception: ErrorCall
no UTxO available for payment.
CallStack (from HasCallStack):
error, called at src/Relude/Debug.hs:288:11 in relude-1.0.0.1-FnlvBqksJVpBc8Ijn4tdSP:Relude.Debug
error, called at test/Hydra/Model.hs:356:21 in main:Hydra.Model
(after 15 tests and 3 shrinks)
Actions
[Var 1 := Seed {seedKeys = [(HydraSigningKey (SignKeyEd25519DSIGN "4d5572d174ba0000000000000000000000000000000000000000000000000000eadad991ccb48abc739cf6802fdfa52776977d504d4cd8a5dd01d1a9875af5d8"),"0302020103020601080801020500020805060505070500040803080000040205")]},
Var 2 := Command {party = Party {vkey = HydraVerificationKey (VerKeyEd25519DSIGN "eadad991ccb48abc739cf6802fdfa52776977d504d4cd8a5dd01d1a9875af5d8")}, command = Init {contestationPeriod = 12.333369372475s}},
Var 3 := Command {party = Party {vkey = HydraVerificationKey (VerKeyEd25519DSIGN "eadad991ccb48abc739cf6802fdfa52776977d504d4cd8a5dd01d1a9875af5d8")}, command = Commit {utxo = [("0302020103020601080801020500020805060505070500040803080000040205",valueFromList [(AdaAssetId,10607997295420064185)])]}},
Var 6 := Command {party = Party {vkey = HydraVerificationKey (VerKeyEd25519DSIGN "eadad991ccb48abc739cf6802fdfa52776977d504d4cd8a5dd01d1a9875af5d8")}, command = NewTx {transaction = Payment {from = "0302020103020601080801020500020805060505070500040803080000040205", to = "0302020103020601080801020500020805060505070500040803080000040205", value = valueFromList [(AdaAssetId,10607997295420064185)]}}}]
```

This is caused by the UTxO not being available in the Head, which might come from the fact we have not yet observed the head being opened?
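Rather than calling `error` when no UTxO is available, the model could make that case explicit so that a precondition or generator can rule the action out. A minimal account-based sketch, assuming the model tracks `(address, value)` pairs and that a payment always consumes one whole entry; `Ledger` and `applyPayment` are hypothetical names:

```haskell
-- Hypothetical account-based view of the head's UTxO used by the model.
type Address = String

type Ledger = [(Address, Integer)]

-- | Move one full UTxO entry of exactly `value` from `src` to `dst`.
-- Returns an error value instead of crashing when the sender owns no such
-- entry, so the caller (e.g. a precondition) can reject the action.
applyPayment :: Address -> Address -> Integer -> Ledger -> Either String Ledger
applyPayment src dst value ledger =
  case break (== (src, value)) ledger of
    (_, []) -> Left ("no UTxO available for payment from " <> src)
    (before, _ : after) -> Right (before ++ after ++ [(dst, value)])
```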

AB Solo - #388

Following discussion with MLabs team on how to setup an ETE test environment, they showed again they were having trouble with posting Fanout: https://github.com/input-output-hk/hydra-poc/issues/388

- Run the demo with locally built docker images and see if I can reproduce the problem

- Add logs related to time observation and deadline

Trying to close -> fanout without creating any transaction gives me this error when posting the fanout transaction:

```
fannedOutUtxoHash /= closedUtxoHash
```

Looking at the `remainingContestationPeriod` retrieved from the close tx, I can see it's mostly correct:

```
remainingContestationPeriod":20.686456384,"tag":"OnCloseTx","snapshotNumber"
```

The end of the effect processing and the subsequent `ShouldPostFanout` event are correctly 20s apart:

```
{"timestamp":"2022-06-09T14:46:41.614095732Z","threadId":87,"namespace":"HydraNode-3","message":{"tag":"Node","node":{"tag":"ProcessedEvent","event":{"tag":"OnChainEvent","chainEvent":{"contents":{"remainingContestationPeriod":20.686456384,"tag":"OnCloseTx","snapshotNumber":0},"tag":"Observation"}},"by":{"vkey":"accac8a5f014fa4a5f012e9fc13f2788f63d2ebccb8b416d496a64a1a3eb61c6"}}}}
{"timestamp":"2022-06-09T14:47:02.301681216Z","threadId":87,"namespace":"HydraNode-3","message":{"tag":"Node","node":{"tag":"ProcessingEvent","event":{"tag":"ShouldPostFanout"},"by":{"vkey":"accac8a5f014fa4a5f012e9fc13f2788f63d2ebccb8b416d496a64a1a3eb61c6"}}}}
```

The UTxO that's posted by `Fanout`:

```json
{
  "3eeea5c2376b033d5bdeab6fe551950883b04c08a37848c6d648ea03476dce83#1": {
    "address": "addr_test1vru2drx33ev6dt8gfq245r5k0tmy7ngqe79va69de9dxkrg09c7d3",
    "value": {
      "lovelace": 1000000000
    }
  },
  "6db235b8759454654d19baf3ef601a2cb0e4ea3ebdc5e9db466dd07bccf53c7d#1": {
    "address": "addr_test1vqg9ywrpx6e50uam03nlu0ewunh3yrscxmjayurmkp52lfskgkq5k",
    "value": {
      "lovelace": 500000000
    }
  },
  "0ad134cc87cdf66a6863464b4393501eda7632f7c268b068c48b2aaf84f20e51#1": {
    "address": "addr_test1vqa25t3aayfmpad20elswmsj94ehmdfjnhc64yz3jg5yl6skf5cck",
    "value": {
      "lovelace": 250000000
    }
  }
}
```

is identical to the initial UTxO reported by `HeadIsOpen`, so the problem probably lies in the hashing functions?

```json
{
  "3eeea5c2376b033d5bdeab6fe551950883b04c08a37848c6d648ea03476dce83#1": {
    "address": "addr_test1vru2drx33ev6dt8gfq245r5k0tmy7ngqe79va69de9dxkrg09c7d3",
    "value": {
      "lovelace": 1000000000
    }
  },
  "6db235b8759454654d19baf3ef601a2cb0e4ea3ebdc5e9db466dd07bccf53c7d#1": {
    "address": "addr_test1vqg9ywrpx6e50uam03nlu0ewunh3yrscxmjayurmkp52lfskgkq5k",
    "value": {
      "lovelace": 500000000
    }
  },
  "0ad134cc87cdf66a6863464b4393501eda7632f7c268b068c48b2aaf84f20e51#1": {
    "address": "addr_test1vqa25t3aayfmpad20elswmsj94ehmdfjnhc64yz3jg5yl6skf5cck",
    "value": {
      "lovelace": 250000000
    }
  }
}
```

The outputs of the transaction:

```json
{
  "3eeea5c2376b033d5bdeab6fe551950883b04c08a37848c6d648ea03476dce83#1": {
    "address": "addr_test1vru2drx33ev6dt8gfq245r5k0tmy7ngqe79va69de9dxkrg09c7d3",
    "value": {
      "lovelace": 1000000000
    }
  },
  "6db235b8759454654d19baf3ef601a2cb0e4ea3ebdc5e9db466dd07bccf53c7d#1": {
    "address": "addr_test1vqg9ywrpx6e50uam03nlu0ewunh3yrscxmjayurmkp52lfskgkq5k",
    "value": {
      "lovelace": 500000000
    }
  },
  "0ad134cc87cdf66a6863464b4393501eda7632f7c268b068c48b2aaf84f20e51#1": {
    "address": "addr_test1vqa25t3aayfmpad20elswmsj94ehmdfjnhc64yz3jg5yl6skf5cck",
    "value": {
      "lovelace": 250000000
    }
  }
}
```

are precisely the expected ones

Trying to run the demo and fanout with one transaction in the head

- Fanout worked on the demo with one transaction in the head, so most probably the problem I am observing is only present when closing with the `initialSnapshot`.

Trying again on demo setup to see if the same problem occurs

- Retrying to close a head without any transaction gives me the same error: the hashes do not match between the fanout and the close

When we create the `closeTx`, we compute the UTxO hash in 2 different ways:

- For the initial snapshot, we take the hash that's been observed from the `CollectCom`
- For other snapshots, we compute it

Hypothesis for #395: either decoding the hash from the `CollectCom` or computing it in `collectCom` is invalid.

Trying to validate the hypothesis by not using the `openUtxoHash` from the state but recomputing it on the fly from the `InitialSnapshot`

- Interestingly, changing the way the hash is computed in the initial snapshot cases makes a test fail. There's even a comment suggesting to have better classification for errors 😄

```haskell
forAllClose action = do -- TODO: label / classify tx and snapshots to understand test failures
```

This failure seems once again to point to the way we compute the hash in `CollectCom`, which is somewhat odd:

```haskell
utxoHash =
  Head.hashPreSerializedCommits $ mapMaybe (extractSerialisedTxOut . snd . snd) orderedCommits

orderedCommits =
  Map.toList commits
```

Could it be that Foldable.toList is not equivalent to Map.toList? => :no:
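A quick check of that conclusion (`valuesAgree` is just an illustrative helper): for a `Map`, `Foldable.toList` returns the values in ascending key order and `Map.toList` returns the `(key, value)` pairs in the same order, so both traversals agree and only the choice of key determines the ordering that ends up hashed.

```haskell
import qualified Data.Foldable as Foldable
import qualified Data.Map.Strict as Map

-- | Foldable.toList on a Map yields its values in ascending key order, and
-- Map.toList yields (key, value) pairs in that same order; dropping the keys
-- from the latter gives exactly the former.
valuesAgree :: Eq v => Map.Map k v -> Bool
valuesAgree m = Foldable.toList m == map snd (Map.toList m)
```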

Looking at the transaction that's generated it seems the utxoHashes are inconsistent.

- The `OnChainHeadState` has:

```
openUtxoHash = "\240\194\v\241o\219j\FS\191\253\235\157C\190\241 \215m\FS\219\155\230\vQ,\156\241{\150\ETX\228\252"
```

- The transaction has a datum with the encoded `Open` state of:

```
DataConstr Constr 1 [ Constr 0 [I 0] , List [ B ";j'\188\206\182\164-b\163\168\208*o\rse2\NAKw\GS\226C\166:\192H\161\139Y\218)" , B ";j'\188\206\182\164-b\163\168\208*o\rse2\NAKw\GS\226C\166:\192H\161\139Y\218)" , B ";j'\188\206\182\164-b\163\168\208*o\rse2\NAKw\GS\226C\166:\192H\161\139Y\218)" ] , B "\240\194\v\241o\219j\FS\191\253\235\157C\190\241 \215m\FS\219\155\230\vQ,\156\241{\150\ETX\228\252" ]
```

- However, the closed state is:

```
DataConstr Constr 2 [ List [ B ";j'\188\206\182\164-b\163\168\208*o\rse2\NAKw\GS\226C\166:\192H\161\139Y\218)" , B ";j'\188\206\182\164-b\163\168\208*o\rse2\NAKw\GS\226C\166:\192H\161\139Y\218)" , B ";j'\188\206\182\164-b\163\168\208*o\rse2\NAKw\GS\226C\166:\192H\161\139Y\218)" ] , I 0 , B "\US\145\166+\232\n\CAN\170\209\213o6\DC2\148\EM\238>\158\240\145\205\251\237\204\GS\167)\ESC\205\204\213\&8" , I 91814000 ]
```

which shows the hashes are not the same

Side remark: Seems like Babbage has provision for starting up a cardano node with stakes: https://github.com/input-output-hk/cardano-node/blob/95c3692cfbd4cdb82071495d771b23e51840fb0e/scripts/babbage/mkfiles.sh#L111

Interestingly, we do not test the case of fanning out an `InitialSnapshot`:

```haskell
-- FIXME: We need a ConfirmedSnapshot here because the utxo's in an
-- 'InitialSnapshot' are ignored and we would not be able to fan them out
confirmed <-
  arbitrary `suchThat` \case
    InitialSnapshot{} -> False
    ConfirmedSnapshot{} -> True
```

I was able to reproduce the failure in a unit test in `StateSpec` by removing the filter that excluded `InitialSnapshot` when generating snapshots to fan out.

A short presentation to the Architecture & Engineering chapter

Sticking to agile principles:

- Working software over Comprehensive Documentation

- People and Interactions over Process and Tools

- Responding to Change over Following a Plan

(We don't really have customers :) )

- Writing tests first is a great way to expose and make explicit the architecture

- Outside-in TDD, start from ETE tests and "drill down"

- Property-Based testing wherever possible

Collaborative diagramming with Miro

Architectural Decision Records:

- Record major decisions impacting the design & arch of the system

- Discuss possibly contentious choices within the team

Pairing - #375

Enhancing the model to wait for specific events to happen when performing -> We reuse the waitForXXX logic from BehaviorSpec tests

Now tackling the definition of an "interesting" property on our model:

- The general idea is to check that the model and the real state of the nodes are consistent with each other
- We start by checking that the committed UTxO are consistent. This requires the ability to observe the current state of the nodes at the point where we check the property -> we store all the nodes' outputs in the `TestHydraNode`

The assertion fails, most probably because the state we observe on the actual nodes is not updated because the events have not been processed

- We need to wait for nodes to reach a quiescent state, i.e. to drain the event queue. We use `threadDelay` to wait for the event queue to be drained, which might not be very robust
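A sketch of waiting on a condition instead of sleeping, using plain GHC STM from `base` (`GHC.Conc`) rather than the io-classes used with `IOSim`; `EventQueue`, `waitUntilDrained` and `drainQueue` are hypothetical names. `retry` blocks the transaction until a `TVar` it read changes, so the waiting thread wakes up exactly when the queue becomes empty:

```haskell
import Control.Concurrent (forkIO)
import GHC.Conc (TVar, atomically, newTVarIO, readTVar, readTVarIO, retry, writeTVar)

-- | Hypothetical event queue shared by a node's processing loop and a test.
type EventQueue = TVar [String]

-- | Block until the queue is empty. `retry` suspends the transaction until
-- one of the TVars it read is written, so there is no fixed delay to tune.
waitUntilDrained :: EventQueue -> IO ()
waitUntilDrained q = atomically $ do
  events <- readTVar q
  if null events then pure () else retry

-- | Stand-in processing loop: pops one event per transaction until empty.
drainQueue :: EventQueue -> IO ()
drainQueue q = do
  done <- atomically $ do
    events <- readTVar q
    case events of
      [] -> pure True
      (_ : rest) -> writeTVar q rest >> pure False
  if done then pure () else drainQueue q
```

Note that an empty queue only means every event has been dequeued; if an event is still being handled after being popped, an in-flight counter would also need to reach zero before the node is truly quiescent.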

Assertion still fails:

- We modify the `outputHistory` at the wrong place, when we `waitNext`, so we never get what we expect

Modifying the TestHydraNode machinery to observe the list of ServerOutput emitted by the node -> tests are green 🎉

We now want to be sure we are actually covering the states and not only the alphabet (the Action ctors):

- This can be achieved by implementing the `monitoring` function and using `tabulate` or `label` with pairs of states.
- We realise the `WorldState` is the same for all nodes, as it represents the consensus that should be reached by Hydra nodes through exchanges of messages and posting of txs. -> refactor it to be a single state
- Write the `monitoring` function to ensure we cover the `Open` state. Why do we get multiple classifications of transitions?

```
Hydra.Model implementation respects model
+++ OK, passed 1000 tests:
85.7% Idle -> Initial
33.9% Initial -> Initial
7.9% Initial -> Open
9.8% Initial -> Initial
3.1% Initial -> Open
2.0% Initial -> Initial
0.9% Initial -> Open
0.3% Initial -> Open
0.2% Initial -> Initial
```

- This is probably tied to the number of actions generated? Increasing the number of parties and the max. success increases the number of groups of transitions, confirming the hypothesis that the `monitoring` is somehow linked to the length of the generated traces.

Problem: The generators are rather blind to the current state, so a lot of actions are discarded and the probability of discarding increases as the length of the trace increases. It's unclear however how the percentages are computed.
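To get one line per transition regardless of trace length, one option is to pool the transitions of all traces and report each one's share of the total, which is roughly what QuickCheck's `tabulate` does within a single table. A pure sketch of that computation (helper names are made up):

```haskell
import Data.List (group, sort)

-- | Consecutive pairs of states visited by one trace.
transitions :: [s] -> [(s, s)]
transitions states = zip states (drop 1 states)

-- | Pool the transitions of all traces and report each distinct transition's
-- share of the total, one entry per transition, in ascending order.
transitionShares :: Ord s => [[s]] -> [((s, s), Double)]
transitionShares traces =
  [ (t, fromIntegral (length grp) / total)
  | grp@(t : _) <- group (sort allTransitions)
  ]
  where
    allTransitions = concatMap transitions traces
    total = fromIntegral (length allTransitions)
```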

AB Solo Programming - #375

Managed to have a running ModelSpec thanks to Quviq's team help:

- Created a separate repository so as not to depend on plutus-apps, with the latest changes from Quviq removing the `Typeable` constraint on the `StateModel`
- A remaining issue was the `Typeable m` constraint on the `StateModel` instance for `WorldState m`

Now trying to extend the model to handle more transitions (e.g. commits, off-chain transactions, closing...), but I am still running into an issue: the `runIOSim` function throws a `FailureDeadlock` error, which seems somewhat odd to me.

- It deadlocks because the code calls `runHydraNode`, which is a `forever` loop 🤦
- Spawning the nodes using `async`: this is fine because we run in `IOSim`, so there's no risk of leaking threads
- Now hitting a problem with the shrinker failing on `elements` on an empty list, which raises an error

Of course, the generator also depends on the state, but the precondition needs an `Action` to be generated first, so it cannot apply as is => there's some duplication in the state to determine when something can be generated and then validated
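One way to limit that duplication is to have a single function listing the actions enabled in a state, and derive both the generator and the precondition from it. A simplified sketch with made-up state and action types (not the actual `WorldState`/`Action`), where an `Int` stands in for the random draw and an empty list yields `Nothing` instead of the `elements []` crash:

```haskell
-- Hypothetical, simplified head lifecycle and actions.
data HeadState = Idle | Initial | Open | Closed
  deriving (Eq, Show)

data Action = Init | Commit | Abort | NewTx | Close
  deriving (Eq, Show)

-- | Single source of truth for which actions make sense in a given state.
enabled :: HeadState -> [Action]
enabled Idle = [Init]
enabled Initial = [Commit, Abort]
enabled Open = [NewTx, Close]
enabled Closed = []

-- | The precondition reuses `enabled` instead of duplicating the logic.
precondition :: HeadState -> Action -> Bool
precondition st action = action `elem` enabled st

-- | The generator only picks among enabled actions and returns Nothing when
-- there are none. The Int stands in for a random draw.
pickAction :: Int -> HeadState -> Maybe Action
pickAction draw st = case enabled st of
  [] -> Nothing
  actions -> Just (actions !! (draw `mod` length actions))
```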

Adding Abort and Commit to the actions, with some "refactoring". The coverage report from the test run still says only Seed and Init transitions are generated and tested so there seems to be a problem in the generator or the precondition?

Got a passing test that generates a whole bunch of sequences of actions, now trying to define some interesting properties to check.

- This requires comparing the model's state with the actual state produced by the implementation, but how do I get hold of the latter?
- It's also better to have distinct constructors for each `Action`, as this is displayed in the coverage report and makes showing transitions more explicit.

Coverage transitions show only Seed and Init transitions: Commits are generated and performed, but they probably produce some kind of error because they are only meaningful when a head is initialised, so we need to wait for the correct state to be observed by the node executing the action, just like we do in the BehaviourSpec.

- Inlinable changes and renames in plutus scripts do not change script hashes

- While refactoring `checkCollectCom` I wonder: is there sharing in Plutus semantics? With strict evaluation, could an expensive fold be computed twice if the value it produces is used twice in an expression?

Working on refactoring the `Wallet` module to simplify it and make it "synchronous": it would be updated using the same chain-following logic as the `Direct` module.

- We introduce a handle to remove the need for connecting directly from the `Wallet` to the node, which allows us to replace the use of `MockServer` in `WalletSpec`
- We simplify the `update` function of the `Wallet` to remove the need for a conditional: we always log the application of a block, which removes the need to test it specifically
- The `Wallet` module depends on ledger types, but we would like to migrate to cardano-api types
- We introduced some machinery to record and check the point at which the `ChainQuery` function is called
- Still have the `DirectSpec` test failing, but we probably don't need it anymore

There might be interesting property tests to write against the Wallet, reusing the machinery from StateSpec: ensure that, given a "chain" and a sequence of rollbacks and roll-forwards, its state is consistent with the chain?
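A property like that could compare a wallet that went through a rollback with one built directly from the resulting chain. The following is a minimal sketch under strong simplifications: `TxIn`, `Tx`, `UTxO`, `Wallet`, `applyBlock`, `rollForward` and `rollBackward` are hypothetical stand-ins, and rollbacks are by number of blocks rather than to a chain point.

```haskell
import Data.Map.Strict (Map)
import qualified Data.Map.Strict as Map

-- Hypothetical, simplified types (not the hydra-node or ledger ones).
type TxIn = (Int, Int) -- (transaction id, output index)
type Address = String
type UTxO = Map TxIn (Address, Integer)

data Tx = Tx
  { txId :: Int
  , txInputs :: [TxIn]
  , txOutputs :: [(Address, Integer)]
  }

-- Apply a block: remove spent outputs, add new outputs paying to `me`.
applyBlock :: Address -> UTxO -> [Tx] -> UTxO
applyBlock me = foldl applyTx
  where
    applyTx utxo tx =
      let remaining = foldr Map.delete utxo (txInputs tx)
          ours =
            Map.fromList
              [ ((txId tx, ix), out)
              | (ix, out@(addr, _)) <- zip [0 ..] (txOutputs tx)
              , addr == me
              ]
       in Map.union ours remaining

-- One UTxO snapshot per applied block (tip first), so a rollback just
-- drops snapshots.
newtype Wallet = Wallet [UTxO]

emptyWallet :: Wallet
emptyWallet = Wallet [Map.empty]

currentUTxO :: Wallet -> UTxO
currentUTxO (Wallet (tip : _)) = tip
currentUTxO (Wallet []) = Map.empty

rollForward :: Address -> [Tx] -> Wallet -> Wallet
rollForward me blk w@(Wallet history) =
  Wallet (applyBlock me (currentUTxO w) blk : history)

-- Roll back `n` blocks, never past the initial (empty) snapshot.
rollBackward :: Int -> Wallet -> Wallet
rollBackward n (Wallet history) =
  case drop n history of
    [] -> Wallet [Map.empty]
    rest -> Wallet rest
```

The property is then: rolling forward `b1, b2`, rolling back one block and rolling forward `b2'` must give the same `currentUTxO` as rolling forward `b1, b2'` from scratch.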

The `ChainSyncClient` interface is really an Observer of the chain, which is implemented by 2 things: the handlers propagating events to the `HeadLogic`, and the `Wallet` handling the internal UTxO to spend. We could unify the two in a composite Observer, which simplifies the implementation to just propagating rollbacks and roll-forwards.
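The composite Observer idea maps naturally onto a `Semigroup` on a record of callbacks: combining two observers notifies both, in order. This is a sketch under assumptions; `ChainObserver`, `walletObserver` and `eventLogger` are hypothetical names, and blocks and points are plain `Int`s for illustration.

```haskell
import Data.IORef

-- Hypothetical observer interface, not the actual chain-sync handler type.
data ChainObserver m block point = ChainObserver
  { onRollForward :: block -> m ()
  , onRollBackward :: point -> m ()
  }

-- Combining two observers notifies both, left one first.
instance Applicative m => Semigroup (ChainObserver m block point) where
  a <> b =
    ChainObserver
      { onRollForward = \blk -> onRollForward a blk *> onRollForward b blk
      , onRollBackward = \pt -> onRollBackward a pt *> onRollBackward b pt
      }

instance Applicative m => Monoid (ChainObserver m block point) where
  mempty = ChainObserver (const (pure ())) (const (pure ()))

-- Toy wallet: remembers applied block numbers, forgets them on rollback.
walletObserver :: IORef [Int] -> ChainObserver IO Int Int
walletObserver ref =
  ChainObserver
    { onRollForward = \blk -> modifyIORef ref (blk :)
    , onRollBackward = \pt -> modifyIORef ref (filter (<= pt))
    }

-- Toy stand-in for the handlers feeding the head logic: logs events.
eventLogger :: IORef [String] -> ChainObserver IO Int Int
eventLogger ref =
  ChainObserver
    { onRollForward = \blk -> modifyIORef ref (("forward " ++ show blk) :)
    , onRollBackward = \pt -> modifyIORef ref (("backward " ++ show pt) :)
    }
```

The chain-sync client would then only hold a single `walletObserver w <> eventLogger l` and stay unaware of how many components consume the chain.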

We can finally ditch the `MockServer` and the `DirectSpec` test, which was its only remaining use: it fails, and the tested behaviour is covered by tests in hydra-cluster.

Converting Model code I wrote to use quickcheck-dynamic.

- The `Action state a` type has an additional type variable which seems to be used to represent the fact that an action produces a value of type `a` on top of changing the `state`, like in this example for a thread registry.
- The `perform` function produces a value of type `a` which is stored in a `Var a` and passed to the `nextState` function to update the `state`. This makes the model's actions independent of the details of the values produced
- The `StateModel` class requires the definition of an `ActionMonad`, which is pinned down for execution of the `perform` function
  - This type cannot be parameterised (it's a type alias, or an associated type family), so it's not possible to say

        type ActionMonad GlobalState = forall s . IOSim s

I wish the model and concrete states were separated instead of conflated in the same typeclass. Ideally, we want to assert there is a homomorphism between the state transition function the model defines and the actual state transition of the code, which requires some way of mapping states between the 2 functions?
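With separate model and concrete states, the homomorphism condition for a single step reads `abstract (implStep s a) == modelStep (abstract s) a`. The following is a toy sketch of that check; all types and functions are hypothetical, with a model that only counts missing commits and an "implementation" that tracks which parties still have to commit:

```haskell
import Data.Set (Set)
import qualified Data.Set as Set

-- Hypothetical toy types, only to illustrate the shape of the check.
data Action = Init | Commit String
  deriving (Eq, Show)

-- Abstract model: only counts how many commits are still missing.
data ModelState = MIdle | MInitial Int | MOpen
  deriving (Eq, Show)

-- "Concrete" state: tracks which parties still have to commit.
data ImplState = IIdle | IInitial (Set String) | IOpen
  deriving (Eq, Show)

allParties :: Set String
allParties = Set.fromList ["alice", "bob"]

modelStep :: ModelState -> Action -> ModelState
modelStep MIdle Init = MInitial (Set.size allParties)
modelStep (MInitial n) (Commit _)
  | n <= 1 = MOpen
  | otherwise = MInitial (n - 1)
modelStep s _ = s

implStep :: ImplState -> Action -> ImplState
implStep IIdle Init = IInitial allParties
implStep (IInitial pending) (Commit p)
  | Set.null pending' = IOpen
  | otherwise = IInitial pending'
  where
    pending' = Set.delete p pending
implStep s _ = s

-- Abstraction function from concrete states to model states.
abstract :: ImplState -> ModelState
abstract IIdle = MIdle
abstract (IInitial pending) = MInitial (Set.size pending)
abstract IOpen = MOpen

-- One step of the homomorphism: abstracting after the real step must equal
-- taking the model step from the abstracted state.
commutes :: ImplState -> Action -> Bool
commutes s a = abstract (implStep s a) == modelStep (abstract s) a
```

A duplicate commit breaks `commutes`, which is exactly the kind of divergence such a check should surface; it also shows that the check only makes sense together with preconditions.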

Got a compilable StateModel for a Hydra off-chain network:

- The solution to the `ActionMonad` problem is easy: leave it as a type variable `m`, adding the needed constraints. The `runActions` property is parameterised over that monad anyway, so it's a simple matter of running in `IOSim`.
- Need to provide a suitable `Show` instance for the `HydraNode` if stored in the `WorldState`, but there's a way to not store nodes there and only have them as the outcome of actions, using the `Var` from the first step
- Looking at `runActions` makes it clearer what's intended:
  - A sequence of actions is generated, using `nextState` as a transition function and passing a `Var n`, where `n` represents the step number, incremented at each step
  - This sequence of actions is run by:
    - checking that the `precondition` of the actual state is ok
    - `perform`ing the action, passing it the variable looked up from the environment (searched by type)
    - storing the result in a new environment
    - checking the `postcondition` for the action and the starting state, passing it the result of the `perform` step
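To make that loop concrete, here is a minimal, self-contained sketch of it. It is not quickcheck-dynamic's API: `Var`, `Step`, `Model`, `numberActions` and `runActions` here are hypothetical, all results share one type instead of being looked up by type, and the action sequence is given rather than generated.

```haskell
-- Hypothetical miniature of the runActions loop; not quickcheck-dynamic's API.
-- Simplification: all action results share one type `result`, so the
-- environment is a plain association list instead of a lookup by type.
newtype Var = Var Int
  deriving (Eq, Show)

data Step action = Step Var action

data Model state action m result = Model
  { precondition :: state -> action -> Bool
  , nextState :: state -> action -> Var -> state
  , perform :: state -> action -> [(Var, result)] -> m result
  , postcondition :: state -> action -> result -> Bool
  }

-- Attach the step number (the `Var n`) to each action.
numberActions :: [action] -> [Step action]
numberActions = zipWith (Step . Var) [1 ..]

-- Check the precondition, perform, extend the environment, check the
-- postcondition against the starting state, then advance the model state.
runActions ::
  Monad m =>
  Model state action m result ->
  state ->
  [Step action] ->
  m (Either String state)
runActions model = go []
  where
    go _ s [] = pure (Right s)
    go env s (Step var a : rest)
      | not (precondition model s a) =
          pure (Left ("precondition failed at " ++ show var))
      | otherwise = do
          res <- perform model s a env
          let env' = (var, res) : env
          if postcondition model s a res
            then go env' (nextState model s a var) rest
            else pure (Left ("postcondition failed at " ++ show var))
```

Keeping `m` abstract in `runActions` mirrors the `ActionMonad` solution above: the same loop can run in `IO` for a quick check or in `IOSim` for the real model.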

Submitted a few ideas from previous days' discussions:

Writing a mutation to check we properly count the PTs when aborting, to ensure the head is properly closed with the correct initials and commits

- It's actually quite involved: we need to forge an initial UTxO with the same asset name but a different policy id, to represent the fact that we are consuming a UTxO from a different head

- The negative test is not failing, which means the validator rejects the transaction for the wrong reason

- Turns out the problem came from using the wrong redeemer: we use the name `Abort` as a constructor name for the `Initial`, `Commit`, and `Head` scripts. Possible solution: add some index using `unstableMakeDataIndexed`

We manage to make the test pass, just checking we burn all head tokens, including PT and ST

We could refactor the on-chain contracts to not use the initial and commit addresses and only look at the head id, but this is mostly straightforward code shuffling. We choose instead to have the off-chain `observeAbortTx` retrieve the head id so that the `State` code can reject observations of aborts which are not from our head.

- Ideally, this decision should be propagated further up, to the Head Logic, which would keep the `HeadId`

Write a test for checking an abort tx for another head is not observed, but surprisingly it passes!

- It happens that the `OnChainHeadState` tracks a single head implicitly in the UTxO it keeps around, hence it enforces the behaviour we expect, but in a not very obvious or intuitive way.
- What we would like is for the observations to just observe any Head-relevant tx and have an upper layer do the filtering
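Splitting observation and filtering could look like the following sketch. The types are hypothetical and heavily simplified: a "transaction" is just a list of burnt tokens, an abort is recognised by its burnt state token named `HydraHeadV1` (the asset name seen in the logs below), and the head id is taken to be that token's policy id.

```haskell
-- Hypothetical, simplified types; not the hydra-node observation API.
newtype HeadId = HeadId String
  deriving (Eq, Show)

-- A "transaction" reduced to its burnt tokens: (policy id, asset name, quantity).
newtype Tx = Tx {txBurns :: [(String, String, Integer)]}

data Observation = OnAbortTx HeadId
  deriving (Eq, Show)

-- Observation layer: recognise an abort of *any* head via its burnt state
-- token and report which head (policy id) it belongs to.
observeAbortTx :: Tx -> Maybe Observation
observeAbortTx tx =
  case [pid | (pid, "HydraHeadV1", -1) <- txBurns tx] of
    [pid] -> Just (OnAbortTx (HeadId pid))
    _ -> Nothing

-- Upper layer: only observations for our own head are relevant.
relevantFor :: HeadId -> Observation -> Bool
relevantFor ours (OnAbortTx hid) = hid == ours
```

With this split, the "abort for another head is not observed" test becomes an explicit check on `relevantFor` instead of a side effect of which UTxO the chain state happens to track.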

AB Solo - Model-Based Testing - #375

Need to create a bunch of HydraNodes from parameters when starting the World and maintain a mapping from a Party identifier to the node to act with the proper actor.

- This is already something we do in the `BehaviorSpec`, so there are probably things to refactor and share. In particular, `withHydraNode` is not at all generic in the type of transactions and the chain implementation, so we should probably refactor that.

I would like to untangle the mini-protocols handling from the actual transactions handling from Direct and Wallet so that we can implement a "mock" chain handling cardano Tx.

- The `TinyWallet` should probably disappear or be externalised as a basic wallet that's provided out-of-the-box but can be replaced by any other wallet, so that users can plug in their own wallets -> this would enable things like External Commits or non-custodial
- `TinyWallet` exposes 3 functions: `coverFee`, `getUTxO` and `sign`, but actually only `sign` is useful outside of the wallet. The other 2 are used only to provide some feedback to the user and to throw specific exceptions in case the wallet cannot cover fees; this could be completely encapsulated in the `TinyWallet`
- The `coverFee` function uses a different `UTxO` than the wallet, which is why it's needed there: we pass the "headUTxO", which is the UTxO representing the head state machine output, but this could be part of the `sign` interface.

Creating nodes for running model against requires:

- Connecting nodes through a network, which can be done reasonably easily with channel

- Connecting nodes to a chain, which should implement the whole logic of actually posting transactions and observing them from the chain

- This is important because it is required to exercise the on-chain contracts and check global properties of the protocol

- Creating queues to put outputs in and consume them from the model

The Model needs to represent the behavior of a full network, which means it needs to maintain the observable state of all the involved parties.

- This makes the `interpret` part trickier: the model expresses expected changes according to some inputs

Reading more of the contract testing article to understand how it deals with the problem of generating sequences of actions: you actually cannot generate a sequence blindly, with each actor emitting actions without taking into account what's happening with the other actors.

- Reading the https://github.com/input-output-hk/plutus-apps/blob/main/quickcheck-dynamic/src/Test/QuickCheck/DynamicLogic.hs code, which contains the core logic for `quickcheck-dynamic` tests
- Peeking at Quviq's work on Hydra from a branch in their clone. As expected, they are testing at the level of the contracts interface, not at the level of the Hydra nodes, which would probably be more interesting to us

We should be able to deduce the expected "quiescent" state from the client's input only, shouldn't we? Up to some possible interleaving of actions and assuming we always wait for events to propagate fully, we should be able to know what the resulting state is.

Extracted PR #376 as a baby step towards being able to run a full (off- + on-chain) model of Hydra Head.

Start implementing a model for the Hydra protocol, taking into account both on- and off-chain behaviour, following https://plutus-apps.readthedocs.io/en/latest/plutus/tutorials/contract-models.html#

- Worked on a 'Model' module tying together various parts of the system. The problem, as always, is how to not duplicate what's already implemented, i.e. how to work at a level which is abstract enough that we can interpret the actions differently, in a simpler model than the actual code.

- It's better to model the system abstractly, somewhat like the `HeadLogic` does, and maintain a translation layer that's just concerned with mapping `Party` to cardano keys and handling the details of interacting with the chain
- What would be nice is to be able to decouple the construction and observation of transactions in our code, located in the `Tx` and `State` modules, from the `Direct` module, e.g. to be able to emulate the chain using an internal ledger rather than the actual chain. This would make it possible to run our tests in the `IOSim` monad, which would make them way faster.

Modelling the Hydra Head makes more sense at the top level, through the Node's API. If we want to do this efficiently, e.g. so that we can run the model in `IOSim`, we would need to emulate both the network layer and the chain layer.

- The network layer can be easily modelled using something similar to what was implemented for hydra-sim a while ago: a set of channels connected to a central dispatcher, as is also implemented in the `BehaviorSpec`
- The chain layer requires a bit more work because we want to ensure we exercise the actual transactions and contracts, which means we want to use most of the `Hydra.Chain.Direct` components but without the networking and the real interaction with a node. This is not very complicated but requires a bit of work, especially as the coupling with networking is quite intricate in those components
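The channel-based network layer can be sketched with base's `Chan`: each node gets its own inbox, and a broadcast writes the message to every inbox (including the sender's, in this sketch). `Network`, `newNetwork`, `broadcast` and `receive` are hypothetical names; to run under `IOSim` one would need io-sim-compatible primitives (e.g. STM queues from io-classes) instead of base `Chan`.

```haskell
import Control.Concurrent.Chan (Chan, newChan, readChan, writeChan)
import Control.Monad (forM_)

-- Hypothetical in-memory network: one inbound channel per node.
newtype Network msg = Network {inboxes :: [Chan msg]}

newNetwork :: Int -> IO (Network msg)
newNetwork n = Network <$> mapM (const newChan) [1 .. n]

-- The "central dispatcher": deliver a message to every node's inbox.
broadcast :: Network msg -> msg -> IO ()
broadcast net msg = forM_ (inboxes net) (\ch -> writeChan ch msg)

-- Node `i` takes its next message, blocking until one is available.
receive :: Network msg -> Int -> IO msg
receive net i = readChan (inboxes net !! i)
```

Because each inbox is FIFO, every node sees broadcasts in the order they were sent, which is a convenient (and stronger than real-world) assumption for a first model.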

Some interesting feedback from Pi@SundaeSwap:

- Things they wish they had (when integrating with cardano-node, somewhat also applicable to Hydra):

- Dry-run transaction submission with comprehensive errors;

- Better error messages / pretty-printer for errors;

- More comprehensive health metrics;

- Ability to inspect the mempool / pending transactions;

- Ways to build transaction more easily, especially in the presence of native assets;

- More comprehensive specifications of intermediate representations used by cardano-cli (e.g. unsigned transactions);

- Have tools work 'offline' when they can, and not require a connection to a running node for every single command (in cardano-cli, this is mostly due to the need to request protocol parameters for various commands, but parameters could be user-provided on the cli instead);

And for Hydra more specifically:

- A way to query L2 protocol parameters from the hydra-node, mostly for off-chain code to use without having to carry around configuration files.

- Add a ReadyToFanout server output

- Roundtrip time of tui spec not good, so diving down two layers -> BehaviorSpec

- Extending the existing fanout behavior test should be enough

- Could fix the HUnit failure not correctly rendered from within shouldRunInSim along the way

- Printing the traces in shouldRunInSim was actually the problematic bit, we need to catch exceptions when trying to process the trace events!

- When adding a `Fanout` client input I realize there is even a `Contest` client input which we are not handling right now!
- After only adding the client input all tests pass.. which was slightly surprising, but I guess having a property enumerating correct handling of all inputs in the right state is probably a bit too hard to do (right now).

- Let's start outside in by updating the TUISpec

- While it feels weird to just wait and issue a command in the tui (without visual feedback), it becomes quite obvious that we need an additional `HeadState` when it comes to handling the `Fanout` client input. How else would the `HeadLogic` know when to handle / not to handle a fanout? As I'm typing this out, we could just route it to the chain layer and return an error if it's too early. Let's start with that.
- After hacking the TUISpec to expect the right thing at the right time, it lacks the granularity to express that fanout is only done by clients and not by the head logic. Dive down to the BehaviorSpec level

- Adding a test that fanout does not happen automatically feels weird, but is somewhat possible by waiting for 1M secs in io-sim

- When doing some business in BehaviorSpec I realized `waitFor` was misleading, as it was only waiting for a second. I ended up removing it and keeping the `waitUntil` functions with veryLong (TM) timeouts to still get nice error locations.
- Why are we using multiples of clusterSize when waiting on the bench?
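The fix for printing traces in `shouldRunInSim` boils down to rendering each trace event defensively, so that an exception hidden in a lazily evaluated event does not mask the original test failure. A minimal sketch in plain `IO`, assuming a `Show` instance for events (`renderSafely` is a hypothetical helper, not the actual test utility):

```haskell
import Control.Exception (SomeException, evaluate, try)

-- Hypothetical helper: render a trace event, turning any exception raised
-- while showing it into a placeholder instead of crashing the report.
renderSafely :: Show a => a -> IO String
renderSafely x = do
  result <- try (evaluate (forceString (show x)))
  pure $ case result of
    Right s -> s
    Left e -> "<failed to render trace event: " ++ show (e :: SomeException) ++ ">"
  where
    -- Force every character so errors hidden in lazy thunks surface here,
    -- inside `try`, rather than later while printing.
    forceString s = foldr seq () s `seq` s
```

Forcing the whole string matters: without it, `try` would only evaluate the outermost constructor and the exception would escape later, while the report is being printed.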

-

Updating cardano-node + deps to vasil testnet tagged version. Goal: connect to and open/close heads on the vasil testnet

-

Only some minor additional changes to `debugPlutus` and the `hydra-node` compiles. Let's try to connect to the testnet. If it fails somehow, it's probably worthwhile to update the test suites and check them first.

-

Using setup instructions from Sam / environments from new cardano-world repo: https://github.com/input-output-hk/cardano-world/tree/master/docs/environments/vasil-qa

-

When using the 'rev = vasil-testnet-v1' for the cardano-node in our shell.nix, I get a cardano-node that is too old and cannot parse the PlutusV2 cost model?

- Using the commit SHA as rev it works.. weird.

-

Need the `hydra-tui` to do evaluation of vasil-testnet. So let's see how we can get that compiled.

- Making it not depend on `hydra-cluster` by factoring out `CardanoClient`: https://github.com/input-output-hk/hydra-poc/pull/360

-

Updating `hydra-test-utils` and other test packages on the side between meetings. We are still directly using cardano-ledger in hydra-test-utils!

- When updating `Hydra.Chain.Direct.Fixture` I merged many things with `Hydra.Ledger.Cardano.Evaluate` defaults
- Generating blocks at some given SlotNo is a bit tricky as we now use Praos, not TPraos

-

Setting up some instructions on how to connect to the testnet and focus on the main goal again

-

Initializing a Head works on vasil testnet! 🎉

- Init tx id: 65b8d0a9a325e8e54c5dea0f9b4a26dacb429959290f6d2914fb824f2db8e8a1

-

Commit/abort fails with `NonOutputSupplimentaryDatums` -> maybe because of inline datums vs. datum witnesses?

- Suspect: including datum witnesses when the input has an inline datum is not liked by the ledger.
- After reverting back to `TxOutDatumInTx`, commit and open work 🎉

-

After successfully opening a head on vasil-testnet, I realized the protocol parameters include fees and our TUI is not accounting for them. So I updated the protocol parameters to 0 fees. When starting the hydra-node again, I see closed/fanout transactions being observed & tried? I never closed the head (maybe accidentally because of Ctrl-c / not hitting Ctrl correctly?). Anyway, now the hydra-node sees a closed head.

-

The hydra-node is now trying to post a fanout tx, which fails to validate because of `fannedOutUtxoHash /= closedUtxoHash`.

-

On a side note: when replaying the chain, the hydra-node tries to post a collect and errors with InvalidStateToPost {txTried = CollectComTx..

-

The hydra-tui then also fails to decode the error message because of some `$.postTxError.tx.witnesses.scripts.f2dc3a3b50082d1fb34e250be1bf93bb684aa552a956b8e16e33a9aa: DecoderErrorDeserialiseFailure \"Script\" (DeserialiseFailure 0 \"expected list len or indef\")`.. This seems to be coming from "inside" the failed tx decoding

- Indeed the scripts field looks odd, it's an object whose keys and values are identical:

      "scripts": { "f2dc3a3b50082d1fb34e250be1bf93bb684aa552a956b8e16e33a9aa": "f2dc3a3b50082d1fb34e250be1bf93bb684aa552a956b8e16e33a9aa", "f48c11e7b724932eccc5685b16ee1181bae3030e0bb9b4e820ce1e1c": "f48c11e7b724932eccc5685b16ee1181bae3030e0bb9b4e820ce1e1c" }

- This is likely our JSON instance. Need to look into this. Probably a good point to get `babbage-preview` back into green test territory first.

AB Solo - #359

Trying to get back on track with time tracking in the `HeadLogic`; it seems we left a failing test for the conversion property and a few other issues in the code

- Added some coverage measurement to the tests for the contestation period, struggling to get the tests to terminate when adding `checkCoverage`. Even lowering the `Confidence` values does not help

Got an error in this test: Seems like we don't correctly extract the time?

- The test actually fails for a good reason! We have a "fake" `remainingContestationPeriod` value set to 0 when observing the close tx.
- We have a special case for observing the `OnCloseTx` whereby we always set the `remainingContestationPeriod` to 0, because we would need the current time to compute it, and this is done in the calling code. Adjusted the relevant `StateSpec` test to deal with that special case too.

ETE tests are still failing, we fail to observe the fanout tx in time, probably because it's rejected

Replacing the use of DiffTime with NominalDiffTime everywhere: According to the time package docs DiffTime is less commonly needed than NominalDiffTime.

When one computes the difference of 2 UTCTime one gets a NominalDiffTime and not a DiffTime. The latter requires handling of leap seconds which requires some table...

Got a failure in DirectSpec which stems from the fact I changed the conversion to/from ContestationPeriod and there wasn't any test checking that! -> add a property to ensure we can roundtrip

Tracing the observed tx in `DirectChainSpec` gives me this:

Observation (OnCloseTx {snapshotNumber = 1, remainingContestationPeriod = -1653315436482929.887860059s})

Looks like computation of remainingContestationPeriod is wrong... 🤦

There were no fewer than 2 more errors:

- In the `posixToUTCTime` function, I multiplied by 1000 instead of dividing by 1000
- In `Direct`, I subtracted in the wrong direction to compute the remaining time
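For reference, the two corrected computations, sketched with the `time` package. Plutus' `POSIXTime` counts milliseconds since the epoch while `posixSecondsToUTCTime` expects seconds, hence the division. The helper names are hypothetical and not the hydra-node functions; the test values are the close deadline and the slot time seen in the traces below.

```haskell
import Data.Time.Clock (NominalDiffTime, UTCTime, diffUTCTime)
import Data.Time.Clock.POSIX (posixSecondsToUTCTime, utcTimeToPOSIXSeconds)

-- Plutus POSIXTime is in milliseconds: divide by 1000 to get seconds.
posixMillisToUTCTime :: Integer -> UTCTime
posixMillisToUTCTime ms = posixSecondsToUTCTime (fromInteger ms / 1000)

-- The inverse direction multiplies by 1000.
utcTimeToPOSIXMillis :: UTCTime -> Integer
utcTimeToPOSIXMillis t = truncate (utcTimeToPOSIXSeconds t * 1000)

-- Remaining contestation period is deadline minus now (not now minus
-- deadline); a negative value means the deadline has already passed.
remainingContestationPeriod :: UTCTime -> UTCTime -> NominalDiffTime
remainingContestationPeriod deadline now = deadline `diffUTCTime` now
```

`diffUTCTime` also returns a `NominalDiffTime` directly, in line with the move away from `DiffTime` above, and the roundtrip between the two conversions is the kind of property that would have caught the multiplication bug.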

ETE tests are still failing: the Fanout tx fails to be posted because its lower bound is too low -> adding some buffer to the remainingContestationPeriod and tweaking the wait time in the test to account for the close grace period

Now all green 🎉

- Date: 2022-05-16 → 2022-05-19

- Event link: https://iogmeetups2022.co.uk/

Several representatives from auditing companies: Runtime Verification, FYEO, Certik, Tweag. Key takeaways:

- Runtime verification also offers audits of projects' tokenomics and layer 2 protocol designs.

- FYEO seemed keen on agile, with auditors embedded as part of the development team. Seems like a good fit for teams that need guidance.

- Tweag presented two of their open-source tools: cooked-validators and pirouette.

- Certik provides Haskell-to-Coq (somewhat automated) translations and then works on proofs of parts of the code through Coq.

Side presentation from another Tweag guy working on a separate project called Hachi which aims to provide some automated contract vulnerabilities discovery. Seemed somewhat similar to snyk.

Both ADAX and SundaeSwap told their stories about being audited. Both saw value in it and were positive about the process. ADAX mentioned they'd love to see more IDE support and automated testing tools in the Haskell/Plutus ecosystem. SundaeSwap stressed that getting an audit early on in the process was a tremendous help/success factor for them.

Quviq presented property testing and in particular contract-model testing. They showcased their latest tools, which are built on top of the Plutus emulator. The presentation was more or less a live walkthrough of a guide they've recently published. It is unclear whether the underlying tools are already available or still under development. They also mentioned how they have crafted a few generic properties that apply to most contracts (e.g. 'no funds remain locked') and how they now have tooling to test that more or less automatically for any project. Overall, Quviq's approach helps get coverage of the visible API surface of a contract but does not help much when it comes to pen-testing adversarial behaviours.

Had a few good chats with various folks, but in particular:

-

With Max (Quviq) regarding contract model-testing and the work they're doing for Hydra. Hydra is indeed not using the PAB, and thus not relying on the contract monad & simulator so we've been working with them to adapt their current tooling to work also with setups like Hydra. Seemed like they were pretty close to being able to tie the knot and show us something.

-

With Duncan (IOG), discussed input endorsers and how the way the design is headed should not much impact existing client applications which could still digest the chain by looking at the consensus block only. Possibly, existing interfaces (i.e. chain-sync mini-protocol) could be adapted in a way that they abstract away the indirections between transaction blocks and consensus blocks coming with Ouroboros Leios / input endorsers. We also discussed the pros and cons of the Cardano-api vs the ledger api.

-

With Pi (SundaeSwap) about ideas for Hydra and how SundaeSwap could work on Hydra with a few additions/modifications. Namely, the ideas of "fantom tokens" and "constrained hydra heads". We also discussed an idea of a "mainnet on-demand short-lived simulations" as a potential tool/project to help people build trust from interacting with dapps. The idea would be to have a node which replicates the mainnet traffic but also allows the execution of arbitrary transactions on that parallel network as if they were executed on the mainnet. So, a kind of testnet but fueled with actual mainnet traffic.

ETE test failure on fanout with contestation deadline. There's clearly something wrong in the way we compute time from slot, two different slots give the same POSIXTime:

fromPostChainTx: (SlotNo 216,POSIXTime {getPOSIXTime = 1000000000000})

fromPostChainTx: (SlotNo 222,POSIXTime {getPOSIXTime = 1000000000000})

Tracing the execution of queryTimeHandle to understand on which basis the time is derived from slotNo. Perhaps there is a miscalculation on-chain when translating slot to POSIXTime?

Problem is that:

- we pass a wrong time to the close function

- thus the contestation deadline is wrong and too far in the future

- when the fanout tx is checked, the validity lower bound is always below contestation deadline.

The contestation deadline is definitely wrong:

head state: Closed {parties = ["gZ\EM\"[\206Y\RSN-{\195\241m\192\242\161\132*\142\221\v\172\DC1\208{\149\243q\245u\188","W\169\255\&2yF\210J\SYN\157\206\168\194\194=\243l\215q*\247\248$\US\150h\"\229\234\186\&4\f","2\132L\DEL\222\132\151Z\226\128\CAN\235\244}`\250\229}\165Y:\244*cy\SYN\171\&1$y\142\201"], snapshotNumber = 1, utxoHash = "\ACK\192@\DC1#\141\249'}{\160\194;\246\146\170n\254\134\155\163\175\204\160~gP\131\133\139f\227", contestationDeadline = POSIXTime {getPOSIXTime = 1000000010000}}

So it seems the internal relative time computation from EpochInfo is correct:

rel time: RelativeTime 19.5s, slotno: (SlotNo 195,POSIXTime {getPOSIXTime = 1000000000000})

and computing directly epochInfoSlotToUTCTime works fine.
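As a sanity check on the expected arithmetic: with a single era and a fixed slot length, slot-to-time is a plain multiplication, so two different slots can never map to the same time. The `RelativeTime 19.5s` at `SlotNo 195` in the trace suggests a 0.1s slot length. This is a sketch under that assumption; the real code goes through the node's `EpochInfo`/era history, and the helper names and the system start used in the test are hypothetical.

```haskell
import Data.Time.Clock (NominalDiffTime)

-- Hypothetical single-era conversion: one fixed slot length since system start.
slotToRelativeTime :: NominalDiffTime -> Integer -> NominalDiffTime
slotToRelativeTime slotLength slot = fromInteger slot * slotLength

-- Plutus-style POSIX milliseconds, given the system start in POSIX milliseconds.
slotToPOSIXMillis :: Integer -> NominalDiffTime -> Integer -> Integer
slotToPOSIXMillis systemStartMillis slotLength slot =
  systemStartMillis + truncate (slotToRelativeTime slotLength slot * 1000)
```

Under these assumptions slots 216 and 222 should be 600ms apart, not both `1000000000000`, which points at the translation step rather than at the relative time computation.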

`translateTimeForPlutusScripts` checks that the protocol version is greater than 5, but our protocolVersion in cardano-node.json is (0,0) -> changing to protocol version 6 shows that time is changing

- Posting FanoutTx still fails -> Are we waiting long enough before trying to post it?

- Doubling the wait time in the `HeadLogic` makes the test pass 🎉

We are "racing" against the chain, so we should probably find a way to observe the passing of time from the chain to head logic.

- We should ask the Plutus team to include `SlotNo` as validity bounds in the `ScriptContext` -> all computations would be done in slots, thus removing the need to translate back and forth
- If we observe time in the `HeadLogic`, it should be expressed in `DiffTime` rather than abstract slots, e.g. by adding a `Tick DiffTime` to `OnChainEvent`?
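A `Tick`-style event would let the head logic react to observed chain time instead of racing it with a fixed wait. The following is a minimal sketch with hypothetical types; it uses `NominalDiffTime` relative to the chain's system start (rather than `DiffTime`) so the deadline comparison is direct, and `FanoutPossible` is an illustrative state, not an existing one.

```haskell
import Data.Time.Clock (NominalDiffTime)

-- Hypothetical: times are relative to the chain's system start.
type RelTime = NominalDiffTime

data OnChainEvent
  = OnCloseTx RelTime -- carries the contestation deadline
  | Tick RelTime -- chain time of the latest observed block
  deriving (Eq, Show)

data HeadState = Open | Closed RelTime | FanoutPossible
  deriving (Eq, Show)

-- The head logic only considers fanout once the *chain* says the deadline
-- has passed, so no fixed wait needs to be kept in sync with slot lengths.
update :: HeadState -> OnChainEvent -> HeadState
update Open (OnCloseTx deadline) = Closed deadline
update (Closed deadline) (Tick now)
  | now > deadline = FanoutPossible
update s _ = s
```

Because the tick comes from observed blocks, a fanout posted after `FanoutPossible` should have a lower bound the ledger already considers past the deadline, whatever the slot length of the underlying chain.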

We need to keep the wait time before posting the fanout tx in sync with the gracePeriod from Direct which shifts the start of the deadline by 100 slots, but time is handled using DiffTime in HeadLogic hence this is really dependent on the slotLength parameter of the underlying chain.

It's hard to define grace periods that work for chains with different slotLength, but changing our slotLength in tests to 1 to be consistent with testnet and mainnet makes it very slow and does not really fix the problem.

ETE test passes with some fixed constant time added to wait but we should discuss a better solution.

Trying to implement a Contest mutation that requires checking the Tx validity interval against the contestationDeadline.

- Problem is to generate a validity upper bound in slots that is guaranteed to be greater than the contestationDeadline in milliseconds.

- The way we compute contestationDeadline on-chain for verification purpose is incorrect. How do I write a failing test?
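Generating a slot bound that is guaranteed to lie after a millisecond deadline is a matter of rounding in the right direction. The following is a sketch assuming a single era with a fixed slot length and times in POSIX milliseconds; `slotStartMillis` and `firstSlotAfter` are hypothetical helpers. The same slot also works as a conservative validity lower bound for the fanout tx.

```haskell
-- Hypothetical helpers: single era, fixed slot length, POSIX milliseconds.
slotStartMillis :: Integer -> Integer -> Integer -> Integer
slotStartMillis systemStart slotLengthMillis slot =
  systemStart + slot * slotLengthMillis

-- Smallest slot whose start is strictly after the deadline: usable as an
-- upper bound guaranteed to exceed the deadline (contest mutation), or as a
-- lower bound guaranteed not to be before it (fanout).
firstSlotAfter :: Integer -> Integer -> Integer -> Integer
firstSlotAfter systemStart slotLengthMillis deadline =
  max 0 ((deadline - systemStart) `div` slotLengthMillis + 1)
```

Rounding down and adding one keeps the result strictly after the deadline even when the deadline falls exactly on a slot boundary.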

Completed work on Contest transactions and handling of contestation deadline for Close and Contest logic -> #356.

Now tackling #357: The Fanout should only be posted and valid after the contestation deadline.

Added a lower bound on Fanout tx validity, but the problem is that now it fails to be posted in our ETE tests because we don't wait enough -> increasing the wait time in ETE tests.

- But even if we wait enough, we'll need to handle resubmission of the tx because we are adjusting the lower bound by some factor.

- Perhaps we can get away with it right now by removing grace period from the fanout transaction and wait long enough?

ETE tests are now failing because of fanout tx being posted too soon:

uncaught exception: PostTxError

CannotCoverFees {walletUTxO = fromList [(TxIn "d048d456e9635308eb97d9ae719e2c279f4931963b4d6088506317524ffa3a46" (TxIx 1),TxOut (AddressInEra (ShelleyAddressInEra ShelleyBasedEraAlonzo) (ShelleyAddress Testnet (KeyHashObj (KeyHash "f8a68cd18e59a6ace848155a0e967af64f4d00cf8acee8adc95a6b0d")) StakeRefNull)) (TxOutValue MultiAssetInAlonzoEra (valueFromList [(AdaAssetId,80500000)])) (TxOutDatumHash ScriptDataInAlonzoEra "a654fb60d21c1fed48db2c320aa6df9737ec0204c0ba53b9b94a09fb40e757f3"))], headUTxO = fromList [(TxIn "d048d456e9635308eb97d9ae719e2c279f4931963b4d6088506317524ffa3a46" (TxIx 0),TxOut (AddressInEra (ShelleyAddressInEra ShelleyBasedEraAlonzo) (ShelleyAddress Testnet (ScriptHashObj (ScriptHash "5703a5ce6ba53c760b93e0d90816f8cb5b41e23d8da2d2a3c8a7a257")) StakeRefNull)) (TxOutValue MultiAssetInAlonzoEra (valueFromList [(AdaAssetId,5000000),(AssetId "417dcfd87cbcc10b0f2dceac9ec6e54f1f36cc28b3845c5c17491b99" "HydraHeadV1",1),(AssetId "417dcfd87cbcc10b0f2dceac9ec6e54f1f36cc28b3845c5c17491b99" "\248\166\140\209\142Y\166\172\232H\NAKZ\SO\150z\246OM\NUL\207\138\206\232\173\201Zk\r",1)])) (TxOutDatumHash ScriptDataInAlonzoEra "61a28685e734137a0d156dc1093de8613bc822d6a60c2edaa8378fe302460b06"))], reason = "ErrScriptExecutionFailed (RdmrPtr Spend 0,ValidationFailedV1 (CekError An error has occurred: User error:\nThe provided Plutus code called 'error'.) 
[\"lower bound validity before contestation deadline\",\"PT5\"])", tx = ShelleyTx ShelleyBasedEraAlonzo (ValidatedTx {body = TxBodyConstr TxBodyRaw {_inputs = fromList [TxInCompact (TxId {_unTxId = SafeHash "d048d456e9635308eb97d9ae719e2c279f4931963b4d6088506317524ffa3a46"}) 0], _collateral = fromList [], _outputs = StrictSeq {fromStrict = fromList [(Addr Testnet (KeyHashObj (KeyHash "f8a68cd18e59a6ace848155a0e967af64f4d00cf8acee8adc95a6b0d")) StakeRefNull,Value 1000000 (fromList []),SNothing)]}, _certs = StrictSeq {fromStrict = fromList []}, _wdrls = Wdrl {unWdrl = fromList []}, _txfee = Coin 0, _vldt = ValidityInterval {invalidBefore = SJust (SlotNo 51), invalidHereafter = SNothing}, _update = SNothing, _reqSignerHashes = fromList [], _mint = Value 0 (fromList [(PolicyID {policyID = ScriptHash "417dcfd87cbcc10b0f2dceac9ec6e54f1f36cc28b3845c5c17491b99"},fromList [("HydraHeadV1",-1),("\248\166\140\209\142Y\166\172\232H\NAKZ\SO\150z\246OM\NUL\207\138\206\232\173\201Zk\r",-1)])]), _scriptIntegrityHash = SJust (SafeHash "bc91a43e1495688a05260b969357c57d11b6f83a082b451e901f107840c0ad9e"), _adHash = SNothing, _txnetworkid = SNothing}, wits = TxWitnessRaw {_txwitsVKey = fromList [], _txwitsBoot = fromList [], _txscripts = fromList [(ScriptHash "417dcfd87cbcc10b0f2dceac9ec6e54f1f36cc28b3845c5c17491b99",PlutusScript PlutusV1 ScriptHash "417dcfd87cbcc10b0f2dceac9ec6e54f1f36cc28b3845c5c17491b99"),(ScriptHash "5703a5ce6ba53c760b93e0d90816f8cb5b41e23d8da2d2a3c8a7a257",PlutusScript PlutusV1 ScriptHash "5703a5ce6ba53c760b93e0d90816f8cb5b41e23d8da2d2a3c8a7a257")], _txdats = TxDatsRaw (fromList [(SafeHash "61a28685e734137a0d156dc1093de8613bc822d6a60c2edaa8378fe302460b06",DataConstr Constr 2 [List [B "8\b\142L*\232/\\E\198\128\138a\166I\r<a,\225\218#W\DC4Fo\199H\251\196\203\187"],I 1,B ",\"\220s\159\251H\249\221M\174\249l\NUL\166\182j\186\144\195\&7F\133\&4Fm9\245HrX\192",I 1000000100000])]), _txrdmrs = RedeemersRaw (fromList [(RdmrPtr Spend 0,(DataConstr Constr 4 [I 1],WrapExUnits 
{unWrapExUnits = ExUnits' {exUnitsMem' = 0, exUnitsSteps' = 0}})),(RdmrPtr Mint 0,(DataConstr Constr 1 [],WrapExUnits {unWrapExUnits = ExUnits' {exUnitsMem' = 0, exUnitsSteps' = 0}}))])}, isValid = IsValid True, auxiliaryData = SNothing})}

Trying to augment the time we wait within the HeadLogic and increase accordingly the time waiting in the ETE test. The node's log ends exactly when the direct chain tries to post them, or rather when the Direct chain component enqueues the transaction for posting, which seems odd, as if the nodes were blocked there:

{"message":{"tag":"Node","node":{"tag":"ProcessingEvent","event":{"tag":"ShouldPostFanout"},"by":{"vkey":"675a19225bce591e4e2d7bc3f16dc0f2a1842a8edd0bac11d07b95f371f575bc"}}},"threadId":35,"timestamp":"2022-05-18T16:31:38.815438965Z","namespace":"HydraNode-3"}

{"message":{"tag":"Node","node":{"tag":"ProcessingEffect","effect":{"tag":"OnChainEffect","onChainTx":{"tag":"FanoutTx","utxo":{"d93a887ada43ba4f5ddc39ccbcdda95ed8e2558e0b960edb87190f8e7a7190bd#1":{"address":"addr_test1vzfjrvg8w3wcqsr0s7t9xu9csz9t9g520yfugkwl8lyh2ys2pjz8a","value":{"lovelace":5000000}},"56602b5268991a51c16d93e3a3f23e8288b07f19c19c63e57189d39156609956#0":{"address":"addr_test1vzfjrvg8w3wcqsr0s7t9xu9csz9t9g520yfugkwl8lyh2ys2pjz8a","value":{"lovelace":1000000}},"56602b5268991a51c16d93e3a3f23e8288b07f19c19c63e57189d39156609956#1":{"address":"addr_test1vpemzng7e5nvp2ynwpstydvkdrsevmhwtswxa8zt0dda2rcrwkrvp","value":{"lovelace":19000000}}}}},"by":{"vkey":"675a19225bce591e4e2d7bc3f16dc0f2a1842a8edd0bac11d07b95f371f575bc"}}},"threadId":35,"timestamp":"2022-05-18T16:31:38.815444838Z","namespace":"HydraNode-3"}

{"message":{"tag":"DirectChain","directChain":{"tag":"ToPost","toPost":{"tag":"FanoutTx","utxo":{"d93a887ada43ba4f5ddc39ccbcdda95ed8e2558e0b960edb87190f8e7a7190bd#1":{"address":"addr_test1vzfjrvg8w3wcqsr0s7t9xu9csz9t9g520yfugkwl8lyh2ys2pjz8a","value":{"lovelace":5000000}},"56602b5268991a51c16d93e3a3f23e8288b07f19c19c63e57189d39156609956#0":{"address":"addr_test1vzfjrvg8w3wcqsr0s7t9xu9csz9t9g520yfugkwl8lyh2ys2pjz8a","value":{"lovelace":1000000}},"56602b5268991a51c16d93e3a3f23e8288b07f19c19c63e57189d39156609956#1":{"address":"addr_test1vpemzng7e5nvp2ynwpstydvkdrsevmhwtswxa8zt0dda2rcrwkrvp","value":{"lovelace":19000000}}}}}},"threadId":35,"timestamp":"2022-05-18T16:31:38.815447104Z","namespace":"HydraNode-3"}

Yet the transactions from each node are correctly constructed; however, the time we compute does not make sense:

fromPostChainTx: (SlotNo 726,POSIXTime {getPOSIXTime = 1000000000000}), tx: FanoutTx {utxo = fromList [(TxIn "14d3799365e384ed57cac9392521c0c78f8c62195c707ddbdb9236582556d5f2" (TxIx 1),TxOut (AddressInEra (ShelleyAddressInEra ShelleyBasedEraAlonzo) (ShelleyAddress Testnet (KeyHashObj (KeyHash "764c16ddcf7f559225399184098ee132c33eca4c80d1def5fe0beb23")) StakeRefNull)) (TxOutValue MultiAssetInAlonzoEra (valueFromList [(AdaAssetId,5000000)])) TxOutDatumNone),(TxIn "7c655025efb8e089b14672a7085594c91e3cb56b3b549fd798bcc1c8ee60dfb8" (TxIx 0),TxOut (AddressInEra (ShelleyAddressInEra ShelleyBasedEraAlonzo) (ShelleyAddress Testnet (KeyHashObj (KeyHash "764c16ddcf7f559225399184098ee132c33eca4c80d1def5fe0beb23")) StakeRefNull)) (TxOutValue MultiAssetInAlonzoEra (valueFromList [(AdaAssetId,1000000)])) TxOutDatumNone),(TxIn "7c655025efb8e089b14672a7085594c91e3cb56b3b549fd798bcc1c8ee60dfb8" (TxIx 1),TxOut (AddressInEra (ShelleyAddressInEra ShelleyBasedEraAlonzo) (ShelleyAddress Testnet (KeyHashObj (KeyHash "f27ab2a3f2ac48c0727c4fc2982e41caedbc27d5356427d7b86cfb50")) StakeRefNull)) (TxOutValue MultiAssetInAlonzoEra (valueFromList [(AdaAssetId,19000000)])) TxOutDatumNone)]}

Running hoogle server --local turns all file:// links into http:// links which makes it straightforward to browse remotely!

Working on contestation period:

- Replace the closedAt state field with a contestationDeadline field taking into account the contestation period

- Check the Contest transaction respects the contestation period

Migrating code in State to stop using tuples for storing on-chain state information and start using proper records

- Annoyingly, it's not possible to have GADT fields with the same name because the constructors have different types
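A minimal sketch of this limitation, using hypothetical types (not Hydra's actual State module): with a type index per state, each GADT constructor has a different result type, and GHC only lets constructors share a record field when their result types agree. Each constructor therefore needs its own field names.

```haskell
{-# LANGUAGE DataKinds #-}
{-# LANGUAGE GADTs #-}
{-# LANGUAGE KindSignatures #-}

-- Hypothetical state index and party type, for illustration only.
data HeadStateKind = StIdle | StOpen

type Party = Int

-- Naming both list fields `parties` would be rejected by GHC: a field shared
-- between GADT constructors requires them to have the same result type, but
-- here one returns OnChainHeadState 'StIdle and the other 'StOpen.
data OnChainHeadState (st :: HeadStateKind) where
  Idle :: {idleParties :: [Party]} -> OnChainHeadState 'StIdle
  Open :: {openParties :: [Party], openContestationPeriod :: Integer} -> OnChainHeadState 'StOpen
```

Prefixing fields with the state name is one common workaround; the cost is some repetition at use sites.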

Passed contestationPeriod to the Open state on-chain and also stored it in the ThreadOutputXXX, so that it's available along the whole chain of transactions, as we need it to check the contest transactions' time against the deadline

Introduced a MutateCloseContestationDeadline mutation for the Close transaction to ensure the deadline is computed correctly, but it "fails" to kill the mutant (the mutated transaction still validates)

ContestationPeriod is an integer representing a number of picoseconds, which can be translated to a DiffTime. We probably don't need that level of resolution and should stick to just a number of seconds -> Change to use Plutus' DiffTimeMillis.
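A sketch of the conversion involved, assuming a hypothetical ContestationPeriodMillis newtype (this does not use the Plutus type mentioned above): since 1 ms is 10^9 ps, going from a picosecond DiffTime to milliseconds is an integer division, which drops any sub-millisecond part.

```haskell
import Data.Time.Clock
  ( DiffTime
  , diffTimeToPicoseconds
  , picosecondsToDiffTime
  , secondsToDiffTime
  )

-- | Hypothetical: a contestation period stored as whole milliseconds.
newtype ContestationPeriodMillis = ContestationPeriodMillis Integer
  deriving (Eq, Show)

-- | Convert a picosecond-resolution DiffTime to milliseconds (1 ms = 10^9 ps),
-- rounding down.
toMillis :: DiffTime -> ContestationPeriodMillis
toMillis d = ContestationPeriodMillis (diffTimeToPicoseconds d `div` 1000000000)

-- | Convert back for off-chain code that works with DiffTime.
fromMillis :: ContestationPeriodMillis -> DiffTime
fromMillis (ContestationPeriodMillis ms) = picosecondsToDiffTime (ms * 1000000000)
```

For example, `toMillis (secondsToDiffTime 10)` gives `ContestationPeriodMillis 10000`.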

- I had an interesting meeting with David Arnold about his proposed approach to DevOps based on Nix

- He showed us what he has done, a quick and dirty experiment, to pack a cardano node into the 4 proposed layers:

- source & binary packages, expressed using nix dependencies down to the lowest possible level (e.g. glibc)

- entrypoints defining how to run the software, what configuration is needed, what runtime dependencies are required

- OCI image packaging which relies on entrypoints to produce a "runnable" thing

- scheduler which describes the execution infrastructure

- We plan to schedule a working session to explore how this approach could apply to Mithril

- After switching to inline datums, I forgot to update the minting policy & validators

- After upgrading the initial validator to PlutusV2 + serialiseData, I also needed to update the off-chain code creating the SerializedTxOut. Important here is to use the Plutus.TxOut type and its correct binary format.

- Off-chain code for observeCommitTx is now failing because it can't deserialize the SerializedTxOut. This now needs a fromPlutusTxOut, and we could use some better functions on the usage side of things (tighten up the assumptions around SerializedTxOut).

- Due to the lack of a FromCBOR Plutus.Data instance, I switched to Serialise Plutus.Data, which goes both ways

- Where was the ToCBOR Plutus.Data instance coming from?


- hashPreserializedCommits seems not to do the right thing now.. off-chain code does not produce the expected/same hash in collectCom.

- Implementing fromPlutusTxOut is a bit of a churn.. not sure if I should do it now. The current version is good enough for tx-cost evaluation and we only need to fix this when we want to properly observe commits off-chain.

- Nope.. we need it to construct abort transactions :/

- I realize we can (and should) define only a single hashTxOuts function to be used off- and on-chain.

- When implementing fromPlutusAddress, I realize that the NetworkId is lost from Ledger -> Plutus and we would have no way to retrieve it back. In the past we have been serializing to the cardano-api/ledger format, which includes it, and observing that back.
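A sketch of why the conversion is lossy, using hypothetical, heavily simplified address types (not the real ledger or Plutus types): the Plutus side keeps only the credential, so the way back cannot recover the network and the caller has to supply it.

```haskell
-- Hypothetical, simplified types for illustration only.
data NetworkId = Mainnet | Testnet
  deriving (Eq, Show)

newtype KeyHash = KeyHash String
  deriving (Eq, Show)

-- Ledger-side address: carries the network it belongs to.
data LedgerAddress = LedgerAddress NetworkId KeyHash
  deriving (Eq, Show)

-- Plutus-side address: only the credential survives.
newtype PlutusAddress = PlutusAddress KeyHash
  deriving (Eq, Show)

-- Lossy direction: the network id is dropped.
toPlutusAddress :: LedgerAddress -> PlutusAddress
toPlutusAddress (LedgerAddress _ kh) = PlutusAddress kh

-- The way back cannot invent the network id, so it must be passed in.
fromPlutusAddress :: NetworkId -> PlutusAddress -> LedgerAddress
fromPlutusAddress nid (PlutusAddress kh) = LedgerAddress nid kh
```

Two addresses that differ only in their network map to the same PlutusAddress, which is exactly the information serializing to the cardano-api/ledger format used to preserve.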

- Finish basic checkContest

- Handle contestation period -> on-chain

- Also handling of failure propagation from Direct

- Need to handle the case of ContestTx failing and resubmission

- BehaviorSpec does not model tx failure -> Add logic to simulated chain? or craft scenario by hand

- Ask the researchers about contesting only once => probably not a security risk, as we guarantee a monotonically increasing snapshot number
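A hypothetical sketch of the monotonicity argument, not Hydra's checkContest: if each accepted contest must carry a strictly newer snapshot and updates the datum, replaying the same snapshot fails automatically. Signature verification and the deadline check a real validator would need are deliberately left out.

```haskell
-- Hypothetical, simplified closed-head datum (signatures and deadline omitted).
newtype ClosedDatum = ClosedDatum {closedSnapshotNumber :: Integer}
  deriving (Eq, Show)

-- | Accept a contest only with a strictly newer snapshot, and return the
-- updated datum, so any further contest must beat that snapshot in turn.
checkContest :: ClosedDatum -> Integer -> Either String ClosedDatum
checkContest datum newSnapshot
  | newSnapshot > closedSnapshotNumber datum = Right (ClosedDatum newSnapshot)
  | otherwise = Left "contest snapshot must be newer than the closed one"
```

Contesting twice with snapshot 3 after closing with snapshot 2 succeeds the first time and is rejected the second time.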

Reviewing #349 to check we have covered all basic cases for contest contract:

- Notice there's duplication in the Close mutations, probably caused by wrongly resolved merge conflicts

- Most mutations are not interesting; the only one we are interested in is MutateParties

Contestation period #351

- Store the time/slot at which close happens on-chain in the datum + add it as time interval

- when constructing the tx, read the current slot + add a buffer as upper bound for validity

- bounds are checked at level 1 validation + contract validation to check consistency of datum

- Contest tx needs to be within closed datum slot + contestation period

- Fanout tx needs to be after closed datum slot
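The rules in the list above can be sketched as plain predicates over a hypothetical slot-based validity interval (not the ledger's ValidityInterval type). It follows the bullets literally, and it additionally assumes that a contest without an upper bound is rejected, since its time could not be bounded otherwise.

```haskell
-- Hypothetical validity interval, in slots.
data ValidityInterval = ValidityInterval
  { lowerBound :: Maybe Integer
  , upperBound :: Maybe Integer
  }
  deriving (Eq, Show)

-- Off-chain: validity of a close tx built at `currentSlot`, with a safety buffer.
closeValidity :: Integer -> Integer -> ValidityInterval
closeValidity currentSlot buffer =
  ValidityInterval (Just currentSlot) (Just (currentSlot + buffer))

-- On-chain (close): the slot recorded in the datum must match the tx upper bound.
checkCloseDatum :: ValidityInterval -> Integer -> Bool
checkCloseDatum vi closedAt = upperBound vi == Just closedAt

-- On-chain (contest): the whole tx must lie within closedAt + contestation period.
checkContestTime :: Integer -> Integer -> ValidityInterval -> Bool
checkContestTime closedAt contestationPeriod vi =
  maybe False (<= closedAt + contestationPeriod) (upperBound vi)

-- On-chain (fanout): the whole tx must lie after the closed slot.
checkFanoutTime :: Integer -> ValidityInterval -> Bool
checkFanoutTime closedAt vi = maybe False (> closedAt) (lowerBound vi)
```

Because the ledger already rejects a transaction outside its validity interval (level 1 validation), the scripts only need to compare the declared bounds with the datum.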

We introduce mutations for changing the validity range of the transaction which leads to some changes in the hydra-cardano-api:

- Trying to use the TxBody pattern to easily deconstruct the validity interval, but we realise it's unidirectional! => need to work with the ledger API

- We realise we need to handle a POSIXTime-based interval on-chain, but it seems Plutus V1's time management is broken
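For context, a small sketch of what "unidirectional" means for a pattern synonym, using a hypothetical Body type rather than cardano-api's TxBody: a pattern declared with `<-` can be matched on but not used as an expression. Presumably this matters for the mutations because they must rebuild the body after changing it.

```haskell
{-# LANGUAGE PatternSynonyms #-}

-- Hypothetical transaction body, not cardano-api's TxBody.
data Body = Body
  { inputs :: [String]
  , validity :: (Maybe Integer, Maybe Integer)
  }
  deriving (Show)

-- A unidirectional pattern synonym (note the `<-`): it can only be matched on.
pattern WithValidity :: (Maybe Integer, Maybe Integer) -> Body
pattern WithValidity v <- Body _ v

{-# COMPLETE WithValidity #-}

-- Deconstructing through the pattern works fine...
validityOf :: Body -> (Maybe Integer, Maybe Integer)
validityOf (WithValidity v) = v

-- ...but `WithValidity (Just 0, Nothing)` is not a valid expression, so a
-- mutation of the validity range has to rebuild the body from the underlying
-- representation instead.
mutateValidity :: (Maybe Integer, Maybe Integer) -> Body -> Body
mutateValidity v body = body {validity = v}
```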

We add a closedAt field to the Closed on-chain state in order to record the moment in time the close happened, to be able to check the contestation period

- Fixing code everywhere following the introduction of closedAt in the on-chain state

- The function to construct a POSIXTime is somewhat involved, but we should have everything available in the Direct component.
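A deliberately simplified sketch of the slot/time arithmetic, assuming hypothetical SlottingParams with a single era and a constant slot length. Real code has to consult the node's era history instead: on mainnet, Byron slots lasted 20 s while Shelley-based eras use 1 s, which is part of why the real function is more involved.

```haskell
-- Hypothetical slotting parameters, assuming one era with constant slot length.
data SlottingParams = SlottingParams
  { systemStartMillis :: Integer -- POSIX time of slot 0, in milliseconds
  , slotLengthMillis :: Integer
  }

-- | POSIX time (ms) at the start of the given slot.
slotToPOSIXMillis :: SlottingParams -> Integer -> Integer
slotToPOSIXMillis params slot =
  systemStartMillis params + slot * slotLengthMillis params

-- | Slot containing the given POSIX time (ms), rounding down.
posixMillisToSlot :: SlottingParams -> Integer -> Integer
posixMillisToSlot params t =
  (t - systemStartMillis params) `div` slotLengthMillis params
```

With a start of 1000 ms and 1000 ms slots, slot 726 begins at 727000 ms.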

- Error calls in makeShelleyTransactionBody for BabbageEra.

- Can try to fill in the gaps with what we get from cardano-ledger.

- Seems like Jordan has done so upstream already, nice.

- None of the tx-cost transactions validate.. let's start debugging with initTx.

- The minting script of initTx fails with:

(ValidationFailedV2 (CekError An error has occurred: User error:

The provided Plutus code called 'error'.

Caused by: (force headList [])) [])

- This seems to be a pretty print of an ErrorWithCause

- force headList [] may be plutus-core for head []

- Trying to work it backwards with a const True minting policy

- Same error; this suggests something is wrong in the invocation of the Plutus code and not "in our code"

- Not invoking wrapMonetaryPolicy and defining our script to be const () -> works!

- Using explicit fromBuiltinData to debug decoding errors (this is a trap.. see further below)

- Yeah.. forgot to switch to PlutusV2 ScriptContext.. this error was not very descriptive :/

- Added some traces when converting to untyped validators / minting policies (this is a trap, neatly implemented .. see further below)

- Get a somewhat realistic limit of initTx now. Need to backport the full evaluation of initTx (incl. execution budgets) + all redeemers (54e9e007a1673a70bf8cbb698bce3f9b7834de9e)
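To illustrate why decoding a script context of the wrong shape can surface as `head []`, here is a toy model: a hypothetical Data type and two-field Ctx, not PlutusTx's Data or ScriptContext. The partial decoder, in the spirit of unsafeFromBuiltinData, just indexes into the fields; the checked one, in the spirit of fromBuiltinData, reports what it actually found.

```haskell
-- Hypothetical toy version of untyped on-chain data, for illustration only.
data Data = Constr Integer [Data] | I Integer | B String | List [Data]
  deriving (Eq, Show)

-- Hypothetical two-field context.
data Ctx = Ctx {ctxInfo :: Integer, ctxPurpose :: Integer}
  deriving (Eq, Show)

-- Partial decoder: cheap, but a context with fewer fields than expected
-- crashes inside `head`, with no hint about what was wrong.
unsafeDecodeCtx :: Data -> Ctx
unsafeDecodeCtx d = case d of
  Constr _ fields -> Ctx (asInt (head fields)) (asInt (head (tail fields)))
  _ -> error "unsafeDecodeCtx: not a Constr"
  where
    asInt (I n) = n
    asInt _ = error "unsafeDecodeCtx: not an integer"

-- Checked decoder: more work per call, but the error says what was found.
decodeCtx :: Data -> Either String Ctx
decodeCtx (Constr 0 [I a, I b]) = Right (Ctx a b)
decodeCtx (Constr 0 fields) = Left ("expected 2 integer fields, got: " ++ show fields)
decodeCtx other = Left ("expected Constr 0, got: " ++ show other)
```

The extra pattern matching in the checked variant is also a plausible, though here unmeasured, reason why a checked decoder costs more on-chain than the unchecked one.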

- Next: cost of commit fails when generating the starting state in unsafeObserveTx

- Likely observeInitTx just returned Nothing, let's trace it

- Seems like cardano-api reports only a TxOutDatumHash and not a TxOutDatumInTx in observeInitTx.. even though we do create the transaction with TxOutDatumInTx

- This seems to be the culprit: https://github.com/input-output-hk/cardano-node/blob/a1e947e6e281f1b3739d34c74356f1b93ecc1d50/cardano-api/src/Cardano/Api/TxBody.hs#L2241-L2252 -> TxOutDatums are not resolved in babbage era?

- Seeing the code it might just work if we pivot to using inline datums. I also asked the node-api team about it, but let's try..

- Seems like ReferenceTxInsScriptsInlineDatumsInBabbageEra is also not re-exported properly

- After using TxOutDatumInline, observation seems to work again
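A toy model of why switching to inline datums helps, with hypothetical types (not cardano-api's TxOutDatum): if the datum is only referenced by hash, observing it requires the datums from the transaction witnesses; when those are not passed along, resolution fails, while an inline datum is always available on the output itself.

```haskell
-- Hypothetical, simplified representations for illustration only.
type DatumHash = String

type Datum = String

data OutDatum
  = NoDatum
  | DatumHashOnly DatumHash -- only the hash is on the output
  | DatumInline Datum -- the datum itself is on the output

-- | Resolve an output's datum, given the (hash, datum) pairs known from the
-- transaction witnesses.
resolveDatum :: [(DatumHash, Datum)] -> OutDatum -> Maybe Datum
resolveDatum _ NoDatum = Nothing
resolveDatum witnessed (DatumHashOnly h) = lookup h witnessed
resolveDatum _ (DatumInline d) = Just d
```

With an empty witness table, `resolveDatum [] (DatumHashOnly "h")` is `Nothing`, whereas the inline variant still yields the datum.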


- Initial and Commit validators need migration to V2, let's try to validate a close transaction first

- Getting closer.. now serialiseData seems to be "forbidden". Maybe missing a cost model?

ValidationFailedV2 (CodecError (DeserialiseFailure 8861 "BadEncoding (0x00000042000452ad,S {currPtr = 0x000000420004309c, usedBits = 6}) \"Forbidden builtin function: (builtin serialiseData)\"")) []))]

- serialiseData is the only builtin requiring protocol version 6 -> let's update it in evaluateTx / our fixtures

- Working to get fanout to validate in tx-cost: hashTxOuts is not consistent off-/on-chain.

- Debugging by vendoring prop_consistentOnAndOffChainHashOfTxOuts into tx-cost
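A toy sketch of the kind of mismatch such a property catches, with everything hypothetical: a simplified TxOut, an ASCII serialiser, and 64-bit FNV-1a standing in for the real hash function. Comparing the pre-images rather than the digests shows where the two sides diverge; here the on-chain variant also encodes the list length. Defining hashTxOuts once and using it on both sides removes the problem by construction.

```haskell
import Data.Bits (xor)
import Data.Char (ord)
import Data.Word (Word64, Word8)

-- Hypothetical, simplified output; not the cardano-api or Plutus TxOut.
data TxOut = TxOut {address :: String, lovelace :: Integer}

-- Toy 64-bit FNV-1a hash, standing in for the real hash function.
fnv1a :: [Word8] -> Word64
fnv1a = foldl step 14695981039346656037
  where
    step h b = (h `xor` fromIntegral b) * 1099511628211

-- Toy serialisation of one output (ASCII only).
serialiseTxOut :: TxOut -> [Word8]
serialiseTxOut (TxOut a v) = map (fromIntegral . ord) (a ++ ":" ++ show v ++ ";")

-- Off-chain pre-image: concatenation of the individually serialised outputs.
offChainPreimage :: [TxOut] -> [Word8]
offChainPreimage = concatMap serialiseTxOut

-- A diverging on-chain pre-image that additionally prefixes the list length.
onChainPreimage :: [TxOut] -> [Word8]
onChainPreimage outs = fromIntegral (length outs) : concatMap serialiseTxOut outs

-- Hypothetical property: both sides must hash exactly the same bytes.
prop_consistentPreimage :: [TxOut] -> Bool
prop_consistentPreimage outs = offChainPreimage outs == onChainPreimage outs

-- The fix: one definition, used both off- and on-chain.
hashTxOuts :: [TxOut] -> Word64
hashTxOuts = fnv1a . offChainPreimage
```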


- After aligning hashTxOuts, it turns out that the "new" fanout transaction is WAY more expensive. Reverting only the encoding to non-serialiseData to double-check

- Okay.. the cost is as high with plutus-cbor, verified by using serialiseTxOuts instead of serialiseData . toBuiltinData

- For some reason fromBuiltinData is much more expensive than unsafeFromBuiltinData in wrapValidator -> how expensive is unsafeFromBuiltinData then? Maybe we should use partly "from-data-decoded" script contexts?


- When fixing arbitrary instances, I realize there are hedgehog generators in cardano-api which we could start using?

- One note though: these generators are fixed range, e.g. 0-10 assets in a txout

- No cardano-ledger generators for "traces" of transactions, only arbitrary ones from serialization

- Need to disable genFixedSizeSequenceOfValidTransactions for now -> it's a spike after all


- The fact that Hydra.Chain.Direct.Wallet uses cardano-ledger types directly is a major PITA

- Predicate failure wrapping is getting out of hand with Babbage "inheriting" Alonzo errors

- it's now a pattern match on

UtxowFailure (FromAlonzoUtxowFail (WrappedShelleyEraFailure (UtxoFailure (FromAlonzoUtxoFail (UtxosFailure (ValidationTagMismatch _ (FailedUnexpectedly (PlutusFailure plutusFailure debug :| _))))))))
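One way such nesting could be contained is a single bidirectional pattern synonym, sketched here with hypothetical, much simplified stand-ins for the ledger failure types (not their real definitions or arity). Use sites then mention only the synonym, so a future change in wrapping touches one definition.

```haskell
{-# LANGUAGE PatternSynonyms #-}

-- Hypothetical, much simplified stand-ins for the nested ledger failure types.
newtype PlutusError = PlutusError String

newtype UtxosFailure = ValidationTagMismatch PlutusError

newtype AlonzoUtxoFailure = UtxosFailure UtxosFailure

newtype BabbageUtxoFailure = FromAlonzoUtxoFail AlonzoUtxoFailure

data UtxowFailure
  = UtxoFailure BabbageUtxoFailure
  | MissingWitness

-- Bidirectional pattern synonym hiding the whole wrapping chain: usable both
-- to match on and to construct such failures.
pattern ScriptFailed :: String -> UtxowFailure
pattern ScriptFailed msg =
  UtxoFailure (FromAlonzoUtxoFail (UtxosFailure (ValidationTagMismatch (PlutusError msg))))

-- Use sites only mention the pattern, not the nesting.
scriptErrorMessage :: UtxowFailure -> Maybe String
scriptErrorMessage (ScriptFailed msg) = Just msg
scriptErrorMessage _ = Nothing
```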


- Some last remaining consensus wrangling in Hydra.Chain.Direct.. now the tx-cost should compile.

- After fixing everything, it seems like cardano-api is not ready yet to handle Babbage scripts -> I get this error when running cabal run tx-cost:

cabal run tx-cost
Up to date
tx-cost: TODO: Babbage scripts - depends on consensus exposing a babbage era
CallStack (from HasCallStack):
  error, called at src/Cardano/Api/TxBody.hs:3300:10 in cardano-api-1.33.0-6a6e2f7c2ab86f979fe10873d25f3d5dd6b48d29a51d0a0c31aaa30d1adbdd8c:Cardano.Api.TxBody