- Add "format string" support for tensors (#655)

- Explicitly allow `openArray` in Tensor `[]`, `[]=`, fix the CI (#666)

- Update arraymancer dependencies (#665)

- Add support for running some key `bitops` functions on integer Tensors (#661)

- Make the output of `ismember` have the same shape as its first input (#657)

- Non-contiguous tensor iteration optimization (#659)

- update nimcuda dependency to v0.2.0

- minor regression fix for `toTensor` taking `SomeSet` which in some cases could lead to the Nim compiler picking the wrong overload

- add Division gate for autograd engine (#583 by @jegork)

- provide a better fix for `append` issue #637 (#654)

- make `[]`, `[]=` more 'type safe', by producing better error messages when compile time errors are expected (instead of relying on CT errors via `index_select` and friends)

- add ability to assign multiple values to multiple indices in `[]=`, i.e. `t[[0, 2]] = [5, 1]`

- Improve the performance of convolve (and correlate) (#650)

- Add versions of `toTensor` that take a type as their second argument (#652)

- More missing numpy and matlab (#649)

- Add `tile` and `repeat_values` procedures (#648)

- Add `reshape_infer` procedure (PR #646)

- Missing special matrices (PR #647)

- properly gensym shim templates in p_accessors (PR #642)

- Fix "imported and not used: 'datatypes' [UnusedImport]" warning (PR #644)

- reinsert `KnownSupportsCopyMem` in `gemm` for docgen (PR #641)

- Fix issue 639 (PR #640)

- Add a few more items to .gitignore (PR #636)

- Some warning removals (PR #635)

- Fix issue #637 (crash when using `append` on an empty tensor) (PR #638)

- More complex tensor features (PR #633)

- Add additional documentation to some of the ufuncs (PR #632)

- Relax some shape checks for rank-1 tensors (PR #625)

- improve docstrings of `makeUniversal` templates, allow extensions (PR #631)

- Complex enhancements (PR #630)

- Support complex64 tensor - real scalar operations (like numpy does) (PR #629)

- Add int2bit functions (PR #628)

- Support appending one or more individual values (PR #627)

- Add support for initializing all false, all true and random bool tensors (PR #626)

- drops support for Nim v1.4. Many things are likely to still work, but the CI tests for 1.4 have been removed.

- add `Tensor.len` as an alias for `Tensor.size` returning an int (#623 by @AngelEzquerra)

- More missing numpy functions (#622 by @AngelEzquerra)

- Missing numpy functions (#619 by @AngelEzquerra)

- Missing math (#610 by @AngelEzquerra)

- Add support for doing a masked fill from a tensor (#612 by @AngelEzquerra)

- Add correlate 1D implementation (#618 by @AngelEzquerra)

- Add `at_mut` template to enable masked_fill on slices (#613 by @AngelEzquerra)

- Add 1D convolution (#617 by @AngelEzquerra)

- Add bounds checks for fold and reduce axis inline operations (#608 by @AngelEzquerra)

- Doc improvements (#609 by @AngelEzquerra)

- Improve the error message when trying to assign to a slice of an immutable tensor (#611 by @AngelEzquerra)

- fix issue #606 about `arange` within a `[]` expression (#607 by @AngelEzquerra)

- fix doc generation (PR #602)

- fix behavior of `squeeze` for 1 element tensors. Now correctly returns a rank 1 tensor instead of a broken rank 0 tensor (PR #600)

- massively improve support for `Complex` tensors (PR #599 by @AngelEzquerra)

- implement span slices with negative steps to reverse tensors (PR #598 by @AngelEzquerra)

- add procedures to create and handle (anti-)diagonal matrices (PR #597 by @AngelEzquerra)

- add `item` function to get element of a single element tensor, similar to PyTorch / Flambeau's `item` (PR #600 by @AngelEzquerra)

- clean up some warnings and hints (PR #595)

- update `nimblas` and `nimlapack` deps to `v0.3.0` for smarter detection of these shared libraries

- add support for Complex tensors for the `decomposition` procedures (PR #594)

- add support for Complex tensors for the common error functions (PR #594)

- add new submodule `linear_algebra/algebra.nim` with `pinv` to compute the pseudo inverse of a given matrix (`SomeFloat` and `Complex`) (PR #594)

- change a few more wrong usages of `newSeqUninit` by `newSeq` (PR #593)

- two minor `nim doc` related fixes

- remove custom `newSeqUninit` if stdlib version is available after nim-lang/Nim#22739 (PRs #591, #592)

- performance improvements to the k-d tree implementation by avoiding `pow` and `sqrt` calls if unnecessary and providing a custom code path for euclidean distances

- fix an issue in `kde` such that the `adjust` argument actually takes effect

- use `system.newSeqUninit` if available (PR #589)

- add support to load Fashion MNIST dataset (PR #590)

- update `std/math` path (PR #588)

- update VM images (PR #588)

- change the signature of `numerical_gradient` for scalars to explicitly reject `Tensor` arguments. See discussion in PR #580.

- import `math` in one test to avoid regression due to upstream change, PR #580

- change `KnownSupportsCopyMem` to a `concept` to allow usage of `supportsCopyMem` instead of a fixed list of supported types

- remove the workaround in `toTensor` as parts of it triggered an upstream ARC bug in certain code. The solution gets rid of the existing workaround and replaces it by a saner solution.

- replace usages of `varargs` by `openArray` thanks to upstream fixes (PR #572)

- remove usages of dataArray (PRs #569 and #570)

- fix issue displaying some 2D tensors (PR #567)

- add `clone` operation for k-d tree (PR #565)

- remove `TensorHelper` type in k-d tree implementation, instead `bind` a custom `<` (PR #565)

- fix `deepCopy` for tensors (PR #565)

- misc fixes for current devel with ORC (PR #573)

- replace badly defined `contains` check of integer in `set[uint16]` (PR #575)

- unify all usages of identifiers with different capitalizations to remove many compile time hints / warnings and add `styleCheck:usages` to `nim.cfg` (PR #564)

- replace explicit `optimizer*` procedures by generic `optimizer` with a typed first argument `optimizer(SGD, ...)`, `optimizer(Adam, ...)` etc. (PR #557)

- replace `shallowCopy` by `move` under ARC/ORC (PR #562)

- rewrote neural network DSL to support custom non-macro layers and composition of multiple models (PR #548)

- syntactic changes to the neural network DSL (PR #548)

  - autograd context is no longer specified at network definition. It is only used when instantiating a model. E.g.: `network FizzBuzzNet: ...` instead of `network ctx, FizzBuzzNet: ...`
  - there is no `Input` layer anymore. All input variables are specified at the beginning of the `forward` declaration
  - in/output-shapes of layers are generally described as `seq[int]` now, but this depends on the concrete layer type
  - layer descriptions behave like functions, so function parameters can be specifically named and potentially reordered or omitted. E.g.: `Conv2D(@[1, 28, 28], out_channels = 20, kernel_size = (5, 5))`
  - the `Conv2D` layer expects the kernel size as a `Size2D` (integer tuple of size 2), instead of passing height and width as separate arguments
  - when using an `out_shape` function of a previous layer to describe a `Linear` layer, one has to use `out_shape[0]` for the number of input features. E.g.: `Linear(fl.out_shape[0], 500)` instead of `Linear(fl.out_shape, 500)`
  - `GRU` has been replaced by `GRULayer`, which has now a different description signature: `(num_input_features, hidden_size, stacked_layers)` instead of `([seq_len, Batch, num_input_features], hidden_size, stacked_layers)`
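The DSL changes listed above can be sketched as follows. This is a minimal illustration assembled from the snippets in the list, not a reference implementation: the network name `DemoNet`, the layer names and the positional `MaxPool2D` arguments (kernel size, padding, stride) are assumptions made for the example.

```nim
import arraymancer

# No autograd context in the definition and no Input layer:
# the input variable `x` is declared in `forward`.
network DemoNet:
  layers:
    cv1:        Conv2D(@[1, 28, 28], out_channels = 20, kernel_size = (5, 5))
    # Assumed positional order: kernel size, padding, stride
    mp1:        MaxPool2D(cv1.out_shape, (2, 2), (0, 0), (2, 2))
    fl:         Flatten(mp1.out_shape)
    # out_shape is a seq[int], so the feature count is out_shape[0]
    hidden:     Linear(fl.out_shape[0], 500)
    classifier: Linear(500, 10)
  forward x:
    x.cv1.relu.mp1.fl.hidden.relu.classifier

# The autograd context only appears when the model is instantiated.
let ctx = newContext Tensor[float32]
let model = ctx.init(DemoNet)
```

Keeping the context out of the definition means the same network description can be instantiated against different contexts.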

- Remove cursor annotation for CPU Tensor again as it causes memory leaks (PR #555)

- disable OpenMP in reducing contexts if ARC/ORC is used as it leads to segfaults (PR #555)

- Add cursor annotation for CPU Tensor fixing #535 (PR #533)

- Add `toFlatSeq` (flatten data and export it as a seq) and `toSeq1D` which export a rank-1 Tensor into a `seq` (PR #533)

- add test for `syevr` (PR #552)

- introduce CI for Nim 1.6 and switch to Github Action task for Nim binaries (PR #551)

- fixes `einsum` in more general generic contexts by replacing the logic introduced in PR #539 by a rewrite from typed AST to `untyped`. This makes it work in (all?) generic contexts, PR #545

- add element wise exponentiation `^.` for scalar base to all elements of a tensor, i.e. `2^.t` for a tensor `t`, thanks to @asnt PR #546.

- fixes `einsum` in generic contexts by allowing `nnkCall` and `nnkOpenSymChoice`, PR #539

- add test for `einsum` showing cross product, PR #538

- add test for display of uninitialized tensor, PR #540

- allow `CustomMetric` as user defined metric in `distances`, PR #541. User must provide their own `distance` procedure for the metric with signature: `proc distance*(metric: typedesc[CustomMetric], v, w: Tensor[float]): float`

- disable AVX512 support by default. Add the `-d:avx512` compilation flag to activate it. Note: this activates it for all CPUs as it hands `-mavx512dq` to gcc / clang!

- further fix undeclared identifier issues present for certain generics context, in this case for the `|` identifier when slicing

- fix printing of uninitialized tensors. Instead of crashing these now print as "Unitialized Tensor[T] ...".

- fix CSV parsing regression (still used `Fdata` field access) and improved efficiency of the parser

- fix autograd code after changes in the Nim compiler from version 1.4 on. Requires to keep the procedure signatures using the base type and then convert to the specific type in the procedure (#528)

- fixes an issue when creating a laser tensor within a generic, in which case one might see "undeclared identifier `rank`"

- remove the MNIST download test from the default test cases, as it is too flaky for a CI

- fix issue #523 by fixing how `map_inline` works for rank > 1 tensors. Access the data storage at correct indices instead of using the regular tensor `[]` accessor (which requires N indices for a rank N tensor)

- change least squares wrapper around `gelsd` to have workspace computed by LAPACK and set `rcond` to default `-1`. But we also make it an argument for users to change (PR #520)

- add k-d tree (PR #447)

- add DBSCAN clustering (PR #413 / PR #518), thanks to @abieler

  - `argsort` now has a `toCopy` argument to avoid sorting the argument tensor in place if not desired
  - `cumsum`, `cumprod` now use axis 0 by default if no axis given
  - as part of DBSCAN + k-d tree a `distances.nim` submodule was added that adds Manhattan, Minkowski, Euclidean and Jaccard distances
  - `nonzero` was added to get indices of non zero elements in a tensor

- fix memory allocation to not zero initialize the memory for tensors (which we do manually). This made `newTensorUninit` not do what it was supposed to (PR #517).

- add `vandermonde` matrix constructor (PR #519)

- change `rcond` argument to `gelsd` for linear least squares solver to use simple `epsilon` (PR #519)

- fixes issue #459, ambiguity in `tanh` activation layer

- fixes issue #514, make all examples compile again

- compile all examples during CI to avoid further regressions at a compilation level (thanks @Anuken)

Hotfix release fixing CUDA tensor printing. The code as pushed in #509 was broken.

This release is named after "Memories of Ice" (2001), the third book of Steven Erikson's epic dark fantasy masterpiece "The Malazan Book of the Fallen".

Changes :

- Add `toUnsafeView` as replacement of `dataArray` to return a `ptr UncheckedArray`

- Doc generation fixes

- `cumsum`, `cumprod`

- Fix least square solver

- Fix mean square error backpropagation

- Adapt to upstream symbol resolution changes

- Basic Graph Convolution Network

- Slicing tutorial revamp

- n-dimensional tensor pretty printing

- Compilation fixes to handle Nim v1.0 to Nim devel

Thanks to @Vindaar for maintaining the repo, the docs, pretty-printing and answering many many questions on Discord while I took a step back. Thanks to @filipeclduarte for the cumsum/cumprod, @Clonkk for updating raw data accesses, @struggle for finding a bug in mean square error backprop, @timotheecour for spreading new upstream requirements downstream and @anon767 for Graph Neural Network.

Changes :

- Fancy Indexing (#434)

- `argmax_max`: the obsolete assert restricting it to 1D/2D tensors was removed. It referred to issue #183 and merged PR #171

Deprecation

- The dot in broadcasting and elementwise operators has changed place. This was not propagated to logical comparison

  Use `==.`, `!=.`, `<=.`, `<.`, `>=.`, `>.` instead of the previous order `.==`. This allows the broadcasting operators to have the same precedence as the natural operators. This also aligns Arraymancer with other Nim packages: Manu and NumericalNim
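As a small illustration of the new spelling, the following sketch compares two tensors elementwise. The tensor values are made up for the example; the operators are the ones named above.

```nim
import arraymancer

let a = [1, 2, 3, 4].toTensor
let b = [1, 5, 3, 0].toTensor

# The dot now follows the comparison operator.
let eq = a ==. b   # elementwise equality, yields a Tensor[bool]
let gt = a >. b    # elementwise "greater than"

doAssert eq == [true, false, true, false].toTensor
doAssert gt == [false, false, false, true].toTensor
```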

Overview (TODO)

This release integrates part of the Laser Backend (https://github.com/numforge/laser) that has been brewing since the end of 2018. The new backend provides the following features:

- Tensors can now either be a view over a memory buffer or manage the memory (like before). The "view" allows zero-copy with libraries using the same multi-dimensional array/tensor memory layout, in particular Numpy, PyTorch and Tensorflow or even image libraries. This can be achieved using the new `fromBuffer` procedures to create a tensor.

- strings, ref types and types with non-trivial destructors will still always own and manage their memory buffer. Trivial types (plain-old data) like integers, floats or complex can use the zero-copy scheme by setting `isMemOwner` to false and then pointing `raw_buffer` to the preallocated buffer. In that case, the memory must be freed manually to avoid memory leaks.

- To keep the benefits of enforcing (im)mutability via the type system, procedures like `dataArray` that used to return raw pointers have been deleted or deprecated in favor of routines that return `RawImmutableView` and `RawMutableView` with only appropriate indexing or mutable indexing defined. This is an improvement over raw pointers. Note that at the moment there is no scheme like a borrow-checker to prevent users from using them even after the buffer has been invalidated. In the future `lent` will be used to provide borrow-checking security.

Breaking changes

- In the past, it was mentioned in the README that Arraymancer supported up to 6 dimensions. In reality up to 7 dimensions was possible. It has now been changed to 6 by default. It is now possible to configure this via a compiler define `LASER_MAXRANK`. For example `nim c -d:LASER_MAXRANK=16 path/to/app` to support up to 16 dimensions, or `nim c -d:LASER_MAXRANK=2 path/to/app` if only 2 dimensions are ever needed and we want to save on stack space and optimize memory cache accesses.

- The CpuStorage data structure has been completely refactored

- The routines `data`, `data=` and `toRawSeq` that used to return the `seq` backing the Tensor have been changed in a backward-incompatible way. They now return the canonical row-major representation of a tensor. With the change to a view and decoupling with a lower-level pointer based backend, Arraymancer does not track anymore the whole reserved memory and so cannot return the raw in-memory storage of the tensor. They have been deprecated.

- Some procedures now have side-effects inherited from Nim's `allocShared`

  - `variable` from the `autograd` module
  - `solve` from the `linear_algebra` module

- `io_hdf5` is not imported automatically anymore if the module is installed. The reason for this is that the HDF5 library runs code in global scope to initialize the HDF5 library. This means dead code elimination does not work and a binary will always depend on the HDF5 shared library if `nimhdf5` is installed, even if not used. Simply import using `import arraymancer/io/io_hdf5`.

Deprecation

- `MetadataArray` is now `Metadata`

- `dataArray` has been deprecated in favor of mutability-safe `unsafe_raw_offset`

This release is named after "Windwalkers" (2004, French "La Horde du Contrevent"), by Alain Damasio.

Changes:

- The `symeig` proc to compute eigenvectors of a symmetric matrix now accepts an "uplo" char parameter. This allows filling only the Upper or Lower part of the matrix; the other half is not used in computation.

- Added `svd_randomized`, a fast and accurate SVD approximation via random sampling. This is the standard driver for large scale SVD applications as SVD on large matrices is very slow.

- `pca` now uses the randomized SVD instead of computing the covariance matrix. It can now efficiently deal with large scale problems. It now accepts `center`, `n_oversamples` and `n_power_iters` arguments. Note that `pca` without centering is equivalent to a truncated SVD.

- LU decomposition has been added

- QR decomposition has been added

- `hilbert` has been introduced. It creates the famous ill-conditioned Hilbert matrix. The matrix is suitable to stress test decompositions.

- The `arange` procedure has been introduced. It creates evenly spaced values within a specified range and step

- The ordering of arguments to error functions has been converted to `(y_pred, y_target)` (from (y_target, y_pred)), enabling the syntax `y_pred.accuracy_score(y)`. All existing error functions in Arraymancer were commutative w.r.t. their arguments so existing code will keep working.

- a `solve` procedure has been added to solve linear systems of equations represented as matrices.

- a `softmax` layer has been added to the autograd and neural networks complementing the SoftmaxCrossEntropy layer which fused softmax + Negative-loglikelihood.

- The stochastic gradient descent now has a version with Momentum

Bug fixes:

- `gemm` could crash when the result was column major.

- The automatic fusion of matrix multiplication with matrix addition `(A * X) + b` could update the b matrix.

- Complex converters do not pollute the global namespace and do not prevent string conversion via `$` of number types due to ambiguous call.

- in-place division has been fixed, a typo made it into subtraction.

- A conflict between NVIDIA "nanosecond" and Nim times module "nanosecond" preventing CUDA compilation has been fixed

Breaking

- In `symeig`, the `eigenvectors` argument is now called `return_eigenvectors`.

- In `symeig` with slice, the new `uplo` precedes the slice argument.

- pca input "nb_components" has been renamed "n_components".

- pca output tuple used the names (results, components). It has been renamed to (projected, components).

- A `pca` overload that projected a data matrix on already existing principal axes was removed. Simply multiply the mean-centered data matrix with the loadings instead.

- Complex converters were removed. This prevents implicit conversion bugs in downstream libraries that are hard to debug and work around. If necessary, users can reimplement converters themselves. This also provides a 20% boost in Arraymancer compilation times

Deprecation:

- The syntax `gemm(A, B, C)` is now deprecated. Use explicit `gemm(1.0, A, B, 0.0, C)` instead. Arguably not zero-ing C could also be a reasonable default.

- The dot in broadcasting and elementwise operators has changed place

  Use `+.`, `*.`, `/.`, `-.`, `^.`, `+.=`, `*.=`, `/.=`, `-.=`, `^.=` instead of the previous order `.+` and `.+=`. This allows the broadcasting operators to have the same precedence as the natural operators. This also aligns Arraymancer with other Nim packages: Manu and NumericalNim
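A short sketch of the new operator order, with made-up values: a column and a row are broadcast against each other with `+.`, and `*.=` updates a tensor in place with a broadcast right-hand side.

```nim
import arraymancer

let col = [[1], [2], [3]].toTensor   # shape [3, 1]
let row = [[10, 20, 30]].toTensor    # shape [1, 3]

# Both operands are broadcast to shape [3, 3].
let outer = col +. row
doAssert outer == [[11, 21, 31], [12, 22, 32], [13, 23, 33]].toTensor

# In-place variant: only the right-hand side is broadcast.
var acc = [[1, 2], [3, 4]].toTensor
acc *.= [[10, 100]].toTensor
doAssert acc == [[10, 200], [30, 400]].toTensor
```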

Thanks to @dynalagreen for the SGD with Momentum, @xcokazaki for spotting the in-place division typo, @Vindaar for fixing the automatic matrix multiplication and addition fusion, @Imperator26 for the Softmax layer, @brentp for reviewing and augmenting the SVD and PCA API, @auxym for the linear equation solver and @berquist for reordering all error functions to the new API. Thanks @b3liever for suggesting the dot change to solve the precedence issue in broadcasting and elementwise operators.

Changes affecting backward compatibility:

- None

Changes:

- 0.20.x compatibility (commit 0921190)

- Complex support

- `Einsum`

- Naive whitespace tokenizer for NLP

- Preview of Laser backend for matrix multiplication without SIMD autodetection (already 5x faster on integer matrix multiplication)

Fix:

- Fix height/width order when reading an image in tensor

Thanks to @chimez for the complex support and updating for 0.20, @metasyn for the tokenizer, @xcokazaki for the image dimension fix and @Vindaar for the einsum implementation

This release is named after "Sign of the Unicorn" (1975), the third book of Roger Zelazny's masterpiece "The Chronicles of Amber".

Changes affecting backward compatibility:

- PCA has been split into 2

  - The old PCA with input `pca(x: Tensor, nb_components: int)` now returns a tuple of result and principal components tensors in descending order instead of just a result
  - A new PCA `pca(x: Tensor, principal_axes: Tensor)` will project the input x on the principal axes supplied

Changes:

- Datasets:

  - MNIST is now autodownloaded and cached
  - Added IMDB Movie Reviews dataset

- IO:

  - Numpy file format support
  - Image reading and writing support (jpg, bmp, png, tga)
  - HDF5 reading and writing

- Machine learning

  - Kmeans clustering

- Neural network and autograd:

  - Support subtraction, sum and stacking in neural networks
  - Recurrent NN: GRUCell, GRU and Fused Stacked GRU support
  - The NN declarative lang now supports GRU
  - Added Embedding layer with up to 3D input tensors [batch_size, sequence_length, features] or [sequence_length, batch_size, features]. Indexing can be done with any sized integers, byte or chars and enums.
  - Sparse softmax cross-entropy now supports target tensors with indices of type: any size integers, byte, chars or enums.
  - Added ADAM optimiser (Adaptive Moment Estimation)
  - Added Hadamard product backpropagation (Elementwise matrix multiply)
  - Added Xavier Glorot, Kaiming He and Yann Lecun weight initialisations
  - The NN declarative lang automatically initialises weights with the following scheme:
    - Linear and Convolution: Kaiming (suitable for Relu activation)
    - GRU: Xavier (suitable for the internal tanh and sigmoid)
    - Embedding: Not supported in declarative lang at the moment

- Tensors:

  - Add tensor splitting and chunking
  - Fancy indexing via `index_select`
  - division broadcasting, scalar division and multiplication broadcasting
  - High-dimensional `toSeq` exports

- End-to-end Examples:

  - Sequence/mini time-series classification example using RNN
  - Training and text generation example with Shakespeare and Jane Austen work. This can be applied to any text-based dataset (including blog posts, Latex papers and code). It should contain at least 700k characters (0.7 MB), this is considered small already.

- Important fixes:

  - Convolution shape inference on non-unit strided convolutions
  - Support the future OpenMP changes from nim#devel
  - GRU: inference was squeezing all singleton dimensions instead of just the "layer" dimension.
  - Autograd: remove pointers to avoid pointing to wrong memory when the garbage collector moves it under pressure. This unfortunately comes at the cost of more GC pressure, this will be addressed in the future.
  - Autograd: remove all methods. They caused issues with generic instantiation and object variants.

Special thanks to @metasyn (MNIST caching, IMDB dataset, Kmeans) and @Vindaar (HDF5 support and the example of using Arraymancer + Plot.ly) for their large contributions on this release.

Ecosystem:

-

Using Arraymancer + Plotly for NN training visualisation: https://github.com/Vindaar/NeuralNetworkLiveDemo

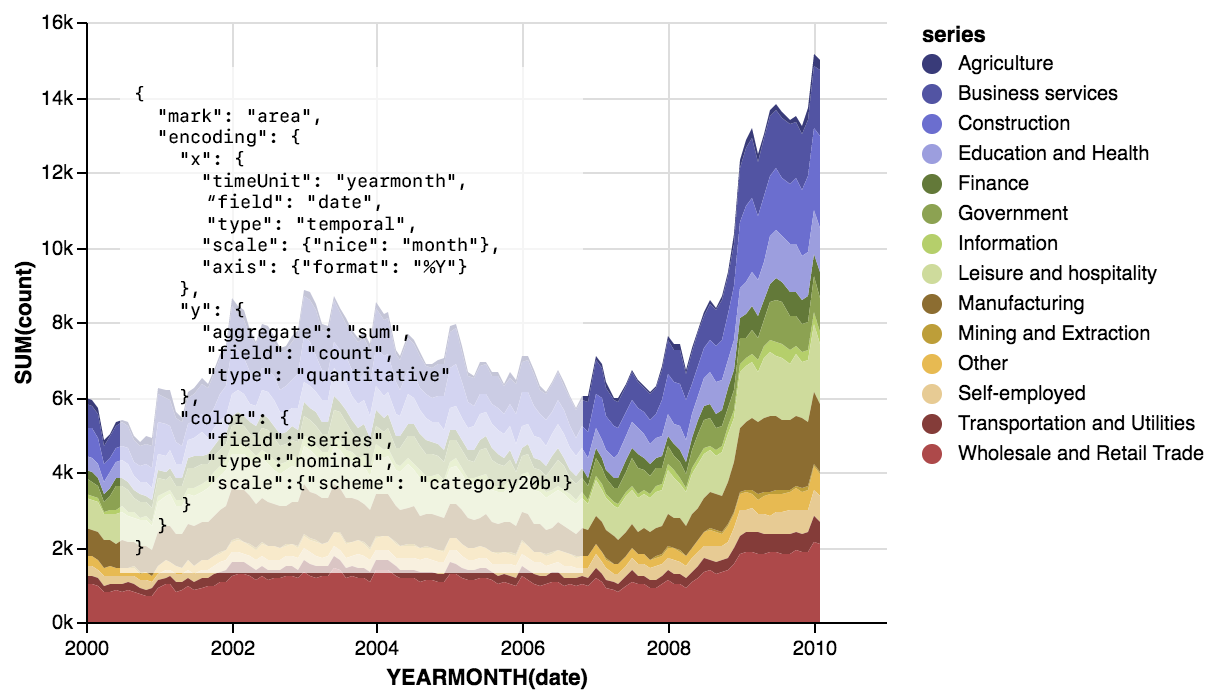

-

Monocle, proof-of-concept data visualisation in Nim using Vega. Hopefully allowing this kind of visualisation in the future:

and compatibility with the Vega ecosystem, especially the Tableau-like Voyager.

-

Agent Smith, reinforcement learning framework. Currently it wraps the

Arcade Learning Environmentfor practicing reinforcement learning on Atari games. In the future it will wrap Starcraft 2 AI bindings and provides a high-level interface and examples to reinforcement learning algorithms. -

Laser, the future Arraymancer backend which provides:

- SIMD intrinsics

- OpenMP templates with fine-grained control

- Runtime CPU features detection for ARM and x86

- A proof-of-concept JIT Assembler

- A raw minimal tensor type which can work as a view to arbitrary buffers

- Loop fusion macros for iteration on an arbitrary number of tensors. As far as I know it should provide the fastest multi-threaded iteration scheme on strided tensors all languages and libraries included.

- Optimized reductions, exponential and logarithm functions reaching 4x to 10x the speed of naively compiled for loops

- Optimised parallel strided matrix multiplication reaching 98% of OpenBLAS performance

- This is a generic implementation that can also be used for integers

- It will support preprocessing (relu_backward, tanh_backward, sigmoid_backward) and epilogue (relu, tanh, sigmoid, bias addition) operation fusion to avoid looping an extra time with a memory bandwidth bound pass.

- Convolutions will be optimised with a preprocessing pass fused into matrix multiplication. Traditional

im2colsolutions can only reach 16% of matrix multiplication efficiency on the common deep learning filter sizes - State-of-the art random distributions and random sampling implementations for stochastic algorithms, text generation and reinforcement learning.

Future breaking changes.

- Arraymancer backend will switch to `Laser` for next version. Impact:

  - At a low-level CPU tensors will become a view on top of a pointer+len for old data types instead of using the default Nim seqs. This will enable plenty of no-copy use cases and even using memory-mapped tensors for out-of-core processing. However libraries relying on the very low-level representation of tensors will break. The future type is already implemented in Laser.
  - Tensors of GC-allocated types like seq, string and references will keep using Nim seqs.
  - While it was possible to use the Javascript backend by modifying the iteration scheme this will not be possible at all. Use JS->C FFI or WebAssembly compilation instead.
  - The inline iteration templates `map_inline`, `map2_inline`, `map3_inline`, `apply_inline`, `apply2_inline`, `apply3_inline`, `reduce_inline`, `fold_inline`, `fold_axis_inline` will be removed and replaced by `forEach` and `forEachStaged` with the following syntax:

        forEach x in a, y in b, z in c:
          x += y * z

    Both will work with an arbitrary number of tensors and will generate 2x to 3x more compact code while being about 30% more efficient for strided iteration. Furthermore `forEachStaged` will allow precise control of the parallelisation strategy including pre-loop and post-loop synchronisation with thread-local variables, locks, atomics and barriers. The existing higher-order functions `map`, `map2`, `apply`, `apply2`, `fold`, `reduce` will not be impacted. For small inlinable functions it will be recommended to use the `forEach` macro to remove function call overhead (you can't inline a proc parameter).

- The neural network domain specific language will use less magic for the `forward` proc. Currently the neural net domain specific language only allows the type `Variable[T]` for inputs and the result. This prevents its use with embedding layers which also require an index input. Furthermore this prevents using a `tuple[output, hidden: Variable]` result type which is very useful to pass RNNs hidden state for generative neural networks (for example text sequence or time-series). So unfortunately the syntax will go from the current `forward x, y:` shortcut to classic Nim `proc forward[T](x, y: Variable[T]): Variable[T]`

- Once CuDNN GRU is implemented, the GRU layer might need some adjustments to give the same results on CPU and Nvidia's GPU and allow using GPU trained weights on CPU and vice-versa.

Thanks:

- metasyn: Datasets and Kmeans clustering

- vindaar: HDF5 support and Plot.ly demo

- bluenote10: toSeq exports

- andreaferetti: Adding axis parameter to Mean layer autograd

- all the contributors of fixes in code and documentation

- the Nim community for the encouragements

This release is named after "The Name of the Wind" (2007), the first book of Patrick Rothfuss's masterpiece "The Kingkiller Chronicle".

Changes:

- Core:

  - OpenCL tensors are now available! However Arraymancer will naively select the first backend available. It can be CPU, it can be GPU. They support basic and broadcasted operations (Addition, matrix multiplication, elementwise multiplication, ...)
  - Addition of `argmax` and `argmax_max` procs.

- Datasets:

  - Loading the MNIST dataset from http://yann.lecun.com/exdb/mnist/
  - Reading and writing from CSV

- Linear algebra:

  - Least squares solver
  - Eigenvalues and eigenvectors decomposition for symmetric matrices

- Machine Learning

  - Principal Component Analysis (PCA)

- Statistics

  - Computation of covariance matrices

- Neural network

  - Introduction of a short intuitive syntax to build neural networks! (A blend of Keras and PyTorch).
  - Maxpool2D layer
  - Mean Squared Error loss
  - Tanh and softmax activation functions

- Examples and tutorials

  - Digit recognition using Convolutional Neural Net
  - Teaching Fizzbuzz to a neural network

- Tooling

  - Plotting tensors through Python

Several updates linked to Nim rapid development and several bugfixes.

Thanks:

- Bluenote10 for the CSV writing proc and the tensor plotting tool

- Miran for benchmarking

- Manguluka for tanh

- Vindaar for bugfixing

- Every participant in RFCs

- And you, user of the library.

This release is named after "Wizard's First Rule" (1994), the first book of Terry Goodkind's masterpiece "The Sword of Truth".

I am very excited to announce the third release of Arraymancer which includes numerous improvements, features and (unfortunately!) breaking changes. Warning ⚠: ALL deprecated procs will be removed next release due to deprecated spam and to reduce maintenance burden.

Changes:

- Very Breaking

  - Tensors use reference semantics now: `let a = b` will share data by default and copies must be made explicitly.
    - There is no need to use `unsafe` proc to avoid copies especially for slices.
    - Unsafe procs are deprecated and will be removed leading to a smaller and simpler codebase and API/documentation.
    - Tensors and CudaTensors now work the same way.
    - Use `clone` to do copies.
    - Arraymancer now works like Numpy and Julia, making it easier to port code.
    - Unfortunately it makes it harder to debug unexpected data sharing.

- Breaking (?)

  - The max number of dimensions supported has been reduced from 8 to 7 to reduce cache misses. Note, in deep learning the max number of dimensions needed is 6 for 3D videos: [batch, time, color/feature channels, Depth, Height, Width]

- Documentation

  - Documentation has been completely revamped and is available here: https://mratsim.github.io/Arraymancer/

- Huge performance improvements

  - Use non-initialized seq
  - shape and strides are now stored on the stack
  - optimization via inlining all higher-order functions
    - `apply_inline`, `map_inline`, `fold_inline` and `reduce_inline` templates are available.
  - all higher order functions are parallelized through OpenMP
  - integer matrix multiplication uses SIMD, loop unrolling, restrict and 64-bit alignment
  - prevent false sharing/cache contention in OpenMP reduction
  - remove temporary copies in several proc
  - runtime checks/exception are now behind `unlikely`
  - `A*B + C` and `C+=A*B` are automatically fused in one operation
  - do not initialize result tensors

- Neural network:

  - Added `linear`, `sigmoid_cross_entropy`, `softmax_cross_entropy` layers
  - Added Convolution layer

- Shapeshifting:

  - Added `unsqueeze` and `stack`

- Math:

  - Added `min`, `max`, `abs`, `reciprocal`, `negate` and in-place `mnegate` and `mreciprocal`

- Statistics:

  - Added variance and standard deviation

- Broadcasting

  - Added `.^` (broadcasted exponentiation)

- Cuda:

  - Support for convolution primitives: forward and backward
  - Broadcasting ported to Cuda

- Examples

  - Added perceptron learning `xor` function example

- Precision

  - Arraymancer uses `ln1p` (ln(1 + x)) and `exp1m` procs (exp(x) - 1) where appropriate to avoid catastrophic cancellation

- Deprecated

  - Version 0.3.1 with ALL the deprecated procs removed will be released in a week. Due to issue nim-lang/Nim#6436, even using non-deprecated procs like `zeros`, `ones`, `newTensor` you will get a deprecated warning.
  - `newTensor`, `zeros`, `ones` arguments have been changed from `zeros([5, 5], int)` to `zeros[int]([5, 5])`
  - All `unsafe` proc are now default and deprecated.
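The reference semantics described under "Very Breaking" can be illustrated with a minimal sketch. The values are made up; only assignment, indexing and `clone` are used.

```nim
import arraymancer

var a = [1, 2, 3].toTensor

# Plain assignment shares the underlying data.
var b = a
b[0] = 99
doAssert a[0] == 99   # the write through `b` is visible in `a`

# An explicit clone gives an independent copy.
var c = a.clone()
c[1] = -1
doAssert a[1] == 2    # `a` is unaffected by writes to `c`
```

This is the same trade-off the notes mention: fewer hidden copies, at the cost of having to remember when two names alias the same buffer.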

This release is named after "The Colour of Magic" (1983), the first book of Terry Pratchett's masterpiece "Discworld".

I am very excited to announce the second release of Arraymancer which includes numerous improvements blablabla ...

Without further ado:

- community

  - There is a Gitter room!

- Breaking

  - `shallowCopy` is now `unsafeView` and accepts `let` arguments
  - Element-wise multiplication is now `.*` instead of `|*|`
  - vector dot product is now `dot` instead of `.*`

- Deprecated

  - All tensor initialization proc have their `Backend` parameter deprecated.
  - `fmap` is now `map`
  - `agg` and `agg_in_place` are now `fold` and nothing (too bad!)

- Initial support for Cuda !!!

  - All linear algebra operations are supported
  - Slicing (read-only) is supported
  - Transforming a slice to a new contiguous Tensor is supported

- Tensors

  - Introduction of `unsafe` operations that work without copy: `unsafeTranspose`, `unsafeReshape`, `unsafebroadcast`, `unsafeBroadcast2`, `unsafeContiguous`,
  - Implicit broadcasting via `.+, .*, ./, .-` and their in-place equivalent `.+=, .-=, .*=, ./=`
  - Several shapeshifting operations: `squeeze`, `at` and their `unsafe` version.
  - New property: `size`
  - Exporting: `export_tensor` and `toRawSeq`
  - Reduce and reduce on axis

- Ecosystem:

  - I express my deep thanks to @edubart for testing Arraymancer, contributing new functions, and improving its overall performance. He built arraymancer-demos and arraymancer-vision, check those out: you can load images in Tensor and do logistic regression on those!

Also thanks to the Nim community on IRC/Gitter, they are a tremendous help (yes Varriount, Yardanico, Zachary, Krux). I probably would have struggled a lot more without the guidance of Andrea's code for Cuda in his neo and nimcuda library. And obviously Araq and Dom for Nim which is an amazing language for performance, productivity, safety and metaprogramming.

This release is named after "Magician: Apprentice" (1982), the first book of Raymond E. Feist's masterpiece "The Riftwar Cycle".

First public release.

Include:

- converting from deep nested proc or array

- Slicing, and slice mutation

- basic linear algebra operations,

- reshaping, broadcasting, concatenating,

- universal functions

- iterators (in-place, axis, inline and closure versions)

- BLAS and BLIS support for fast linear algebra